Independent and Dependent Variables

Saul McLeod, PhD

Editor-in-Chief for Simply Psychology

BSc (Hons) Psychology, MRes, PhD, University of Manchester

Saul McLeod, PhD., is a qualified psychology teacher with over 18 years of experience in further and higher education. He has been published in peer-reviewed journals, including the Journal of Clinical Psychology.


Olivia Guy-Evans, MSc

Associate Editor for Simply Psychology

BSc (Hons) Psychology, MSc Psychology of Education

Olivia Guy-Evans is a writer and associate editor for Simply Psychology. She has previously worked in healthcare and educational sectors.


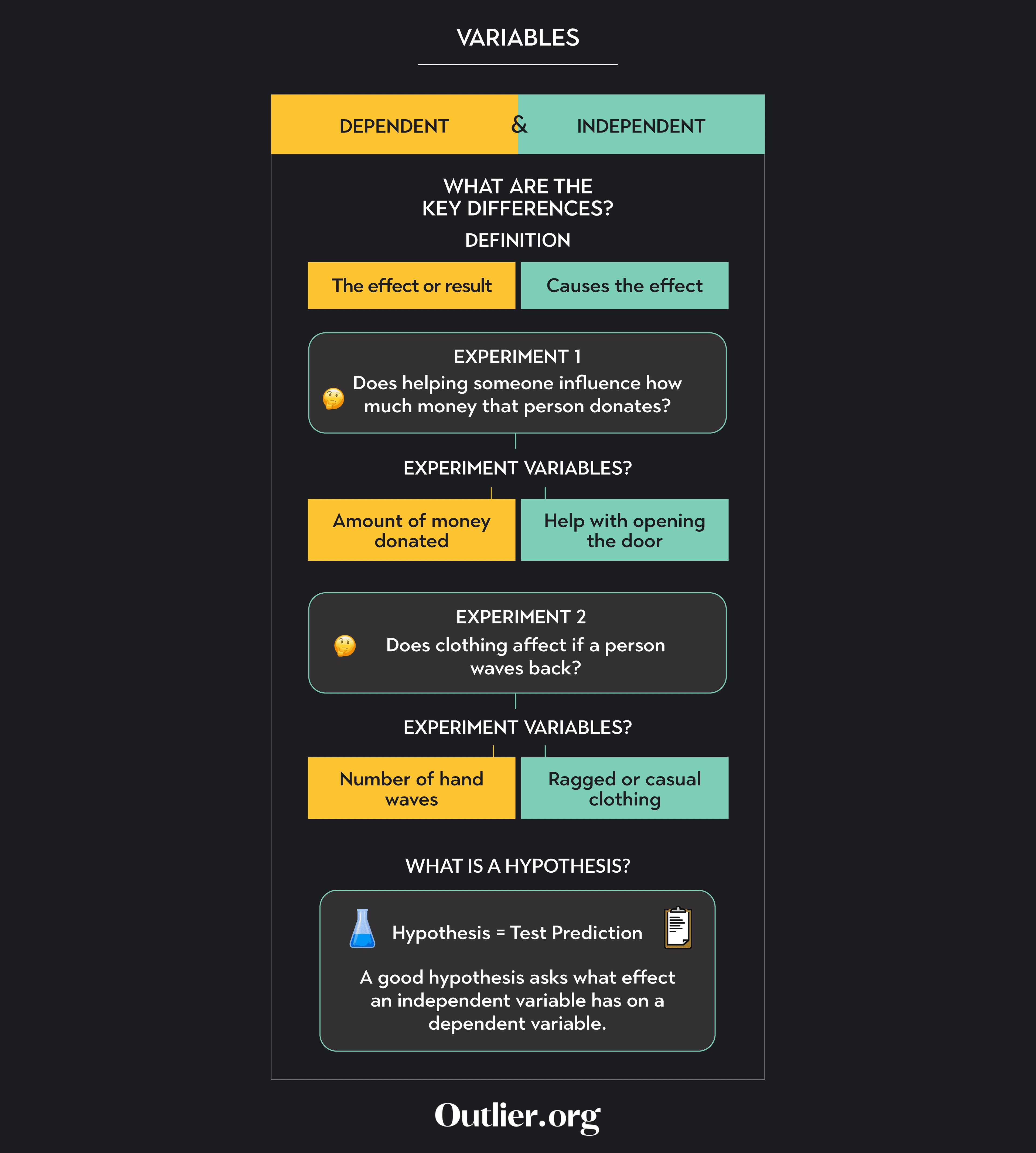

In research, a variable is any characteristic, number, or quantity that can be measured or counted. In experimental investigations, two kinds of variable matter most: the independent variable and the dependent variable.

In research, the independent variable is manipulated to observe its effect, while the dependent variable is the measured outcome. Essentially, the independent variable is the presumed cause, and the dependent variable is the observed effect.

Variables provide the foundation for examining relationships, drawing conclusions, and making predictions in research studies.

Independent Variable

In psychology, the independent variable is the variable the experimenter manipulates or changes and is assumed to directly affect the dependent variable.

It’s considered the cause or factor that drives change, allowing psychologists to observe how it influences behavior, emotions, or other dependent variables in an experimental setting. Essentially, it’s the presumed cause in cause-and-effect relationships being studied.

For example, allocating participants to drug or placebo conditions (independent variable) to measure any changes in the intensity of their anxiety (dependent variable).

In a well-designed experimental study, the independent variable is the only important difference between the experimental (e.g., treatment) and control (e.g., placebo) groups.

By changing the independent variable and holding other factors constant, psychologists aim to determine if it causes a change in another variable, called the dependent variable.

For example, in a study investigating the effects of sleep on memory, the amount of sleep (e.g., 4 hours, 8 hours, 12 hours) would be the independent variable, as the researcher might manipulate or categorize it to see its impact on memory recall, which would be the dependent variable.

Dependent Variable

In psychology, the dependent variable is the variable being tested and measured in an experiment and is “dependent” on the independent variable.

In psychology, a dependent variable represents the outcome or results and can change based on the manipulations of the independent variable. Essentially, it’s the presumed effect in a cause-and-effect relationship being studied.

An example of a dependent variable is depression symptoms, which depend on the independent variable (type of therapy).

In an experiment, the researcher looks for the possible effect on the dependent variable that might be caused by changing the independent variable.

For instance, in a study examining the effects of a new study technique on exam performance, the technique would be the independent variable (as it is being introduced or manipulated), while the exam scores would be the dependent variable (as they represent the outcome of interest that’s being measured).

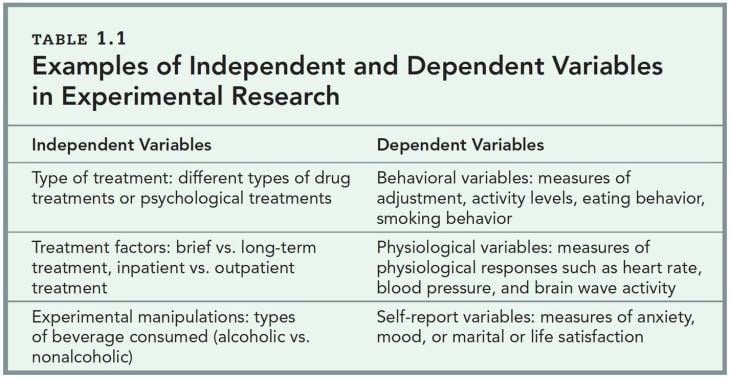

Examples in Research Studies

For example, we might change the type of information (e.g., organized or random) given to participants to see how this might affect the amount of information remembered.

In this example, the type of information is the independent variable (because it changes), and the amount of information remembered is the dependent variable (because this is being measured).

For the following hypotheses, name the IV and the DV.

1. Lack of sleep significantly affects learning in 10-year-old boys.

IV……………………………………………………

DV……………………………………………………

2. Social class has a significant effect on IQ scores.

IV……………………………………………………

DV……………………………………………………

3. Stressful experiences significantly increase the likelihood of headaches.

IV……………………………………………………

DV……………………………………………………

4. Time of day has a significant effect on alertness.

IV……………………………………………………

DV……………………………………………………

Operationalizing Variables

To ensure cause and effect are established, it is important that we identify exactly how the independent and dependent variables will be measured; this is known as operationalizing the variables.

Operational variables (or operationalizing definitions) refer to how you will define and measure a specific variable as it is used in your study. This enables another psychologist to replicate your research and is essential in establishing reliability (achieving consistency in the results).

For example, if we are concerned with the effect of media violence on aggression, then we need to be very clear about what we mean by the different terms. In this case, we must state what we mean by the terms “media violence” and “aggression” as we will study them.

Therefore, you could state that “media violence” is operationally defined (in your experiment) as “exposure to a 15-minute film showing scenes of physical assault”; “aggression” is operationally defined as “levels of electrical shocks administered to a second ‘participant’ in another room.”

In another example, the hypothesis “Young participants will have significantly better memories than older participants” is not operationalized. How do we define “young,” “old,” or “memory”? “Participants aged between 16 – 30 will recall significantly more nouns from a list of twenty than participants aged between 55 – 70” is operationalized.

The key point here is that we have clarified what we mean by the terms as they were studied and measured in our experiment.

If we didn’t do this, it would be very difficult (if not impossible) to compare the findings of different studies of the same behavior.

Operationalization has the advantage of generally providing a clear and objective definition of even complex variables. It also makes it easier for other researchers to replicate a study and check for reliability.
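
As a rough illustration, the operationalized memory hypothesis above can be written down as explicit rules. The sketch below is only one possible way to do it: the function names and the sample records are invented for illustration, while the age ranges and the twenty-noun list come from the hypothesis itself.

```python
from statistics import mean

# Operational definitions taken from the hypothesis in the text.
YOUNG_AGES = range(16, 31)   # ages 16 to 30 inclusive
OLDER_AGES = range(55, 71)   # ages 55 to 70 inclusive
LIST_LENGTH = 20             # nouns shown to each participant


def age_group(age):
    """Return the IV level for a participant, or None if outside both ranges."""
    if age in YOUNG_AGES:
        return "young"
    if age in OLDER_AGES:
        return "older"
    return None


def mean_recall_by_group(participants):
    """Average the DV (nouns recalled out of LIST_LENGTH) for each IV level."""
    scores = {"young": [], "older": []}
    for age, nouns_recalled in participants:
        group = age_group(age)
        if group is not None and 0 <= nouns_recalled <= LIST_LENGTH:
            scores[group].append(nouns_recalled)
    return {group: mean(values) for group, values in scores.items() if values}


# Hypothetical (age, nouns recalled) records, invented for illustration only.
sample = [(18, 14), (25, 16), (30, 12), (40, 11), (55, 10), (63, 9), (70, 11)]
result = mean_recall_by_group(sample)
print(result)
```

Because the definitions are spelled out, a 40-year-old participant is simply excluded, and anyone repeating the study would classify participants and score recall in the same way.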

For the following hypotheses, name the IV and the DV and operationalize both variables.

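1. Women are more attracted to men without earrings than men with earrings.

I.V. ____________________________________________________________

D.V. ____________________________________________________________

Operational definitions:

I.V. ____________________________________________________________

D.V. ____________________________________________________________

2. People learn more when they study in a quiet versus noisy place.

I.V. ____________________________________________________________

D.V. ____________________________________________________________

Operational definitions:

I.V. ____________________________________________________________

D.V. ____________________________________________________________

3. People who exercise regularly sleep better at night.

I.V. ____________________________________________________________

D.V. ____________________________________________________________

Operational definitions:

I.V. ____________________________________________________________

D.V. ____________________________________________________________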

Can there be more than one independent or dependent variable in a study?

Yes, it is possible to have more than one independent or dependent variable in a study.

In some studies, researchers may want to explore how multiple factors affect the outcome, so they include more than one independent variable.

Similarly, they may measure multiple things to see how they are influenced, resulting in multiple dependent variables. This allows for a more comprehensive understanding of the topic being studied.

What are some ethical considerations related to independent and dependent variables?

Ethical considerations related to independent and dependent variables involve treating participants fairly and protecting their rights.

Researchers must ensure that participants provide informed consent and that their privacy and confidentiality are respected. Additionally, it is important to avoid manipulating independent variables in ways that could cause harm or discomfort to participants.

Researchers should also consider the potential impact of their study on vulnerable populations and ensure that their methods are unbiased and free from discrimination.

Ethical guidelines help ensure that research is conducted responsibly and with respect for the well-being of the participants involved.

Can qualitative data have independent and dependent variables?

Yes, both quantitative and qualitative data can have independent and dependent variables.

In quantitative research, independent variables are usually measured numerically and manipulated to understand their impact on the dependent variable. In qualitative research, independent variables can be qualitative in nature, such as individual experiences, cultural factors, or social contexts, influencing the phenomenon of interest.

The dependent variable, in both cases, is what is being observed or studied to see how it changes in response to the independent variable.

So, regardless of the type of data, researchers analyze the relationship between independent and dependent variables to gain insights into their research questions.

Can the same variable be independent in one study and dependent in another?

Yes, the same variable can be independent in one study and dependent in another.

The classification of a variable as independent or dependent depends on how it is used within a specific study. In one study, a variable might be manipulated or controlled to see its effect on another variable, making it independent.

However, in a different study, that same variable might be the one being measured or observed to understand its relationship with another variable, making it dependent.

The role of a variable as independent or dependent can vary depending on the research question and study design.

15 Independent and Dependent Variable Examples

Dave Cornell (PhD)

Dr. Cornell has worked in education for more than 20 years. His work has involved designing teacher certification for Trinity College in London and in-service training for state governments in the United States. He has trained kindergarten teachers in 8 countries and helped businessmen and women open baby centers and kindergartens in 3 countries.


Chris Drew (PhD)

This article was peer-reviewed and edited by Chris Drew (PhD). The review process on Helpful Professor involves having a PhD level expert fact check, edit, and contribute to articles. Reviewers ensure all content reflects expert academic consensus and is backed up with reference to academic studies. Dr. Drew has published over 20 academic articles in scholarly journals. He is the former editor of the Journal of Learning Development in Higher Education and holds a PhD in Education from ACU.

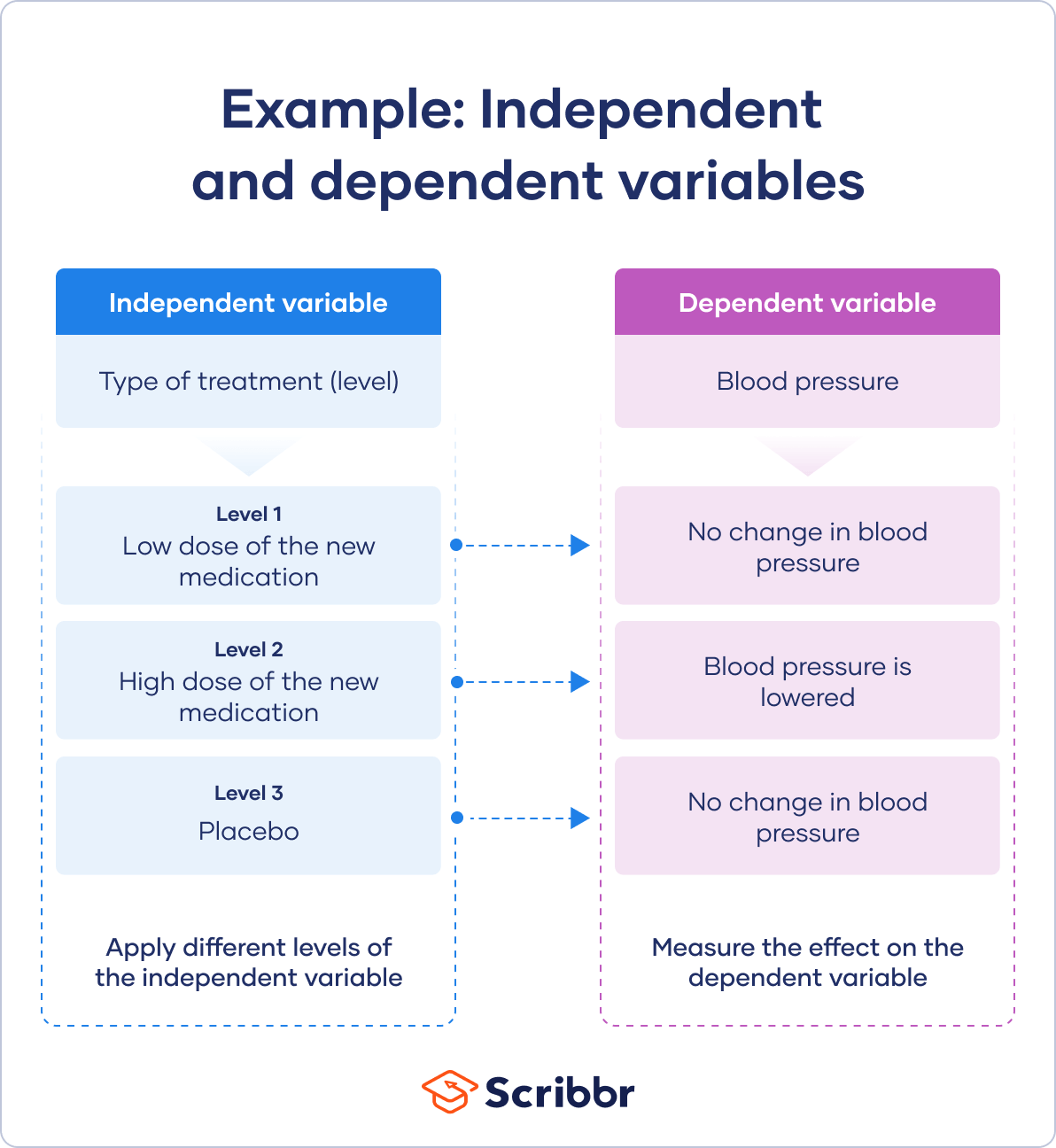

An independent variable (IV) is what is manipulated in a scientific experiment to determine its effect on the dependent variable (DV).

By varying the level of the independent variable and observing associated changes in the dependent variable, a researcher can conclude whether the independent variable affects the dependent variable or not.

This can provide very valuable information when studying just about any subject.

Because the researcher controls the level of the independent variable, it can be determined if the independent variable has a causal effect on the dependent variable.

The term causation is vitally important. Scientists want to know what causes changes in the dependent variable. The only way to do that is to manipulate the independent variable and observe any changes in the dependent variable.

Definition of Independent and Dependent Variables

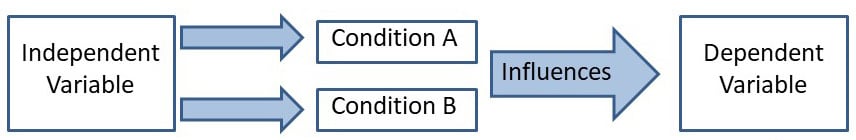

The independent variable and dependent variable are used in a very specific type of scientific study called the experiment.

Although there are many variations of the experiment, generally speaking, it involves either the presence or absence of the independent variable and the observation of what happens to the dependent variable.

The research participants are randomly assigned to either receive the independent variable (called the treatment condition), or not receive the independent variable (called the control condition).

Other variations of an experiment might include having multiple levels of the independent variable.

If the independent variable affects the dependent variable, then it should be possible to observe changes in the dependent variable based on the presence or absence of the independent variable.

Of course, there are a lot of issues to consider when conducting an experiment, but these are the basic principles.
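
Random assignment to a treatment and a control condition, as described above, can be sketched in a few lines. This is a minimal illustration, not a prescribed procedure: the function name `randomly_assign`, the participant IDs, and the seed are all assumptions made for the example.

```python
import random


def randomly_assign(participant_ids, seed=None):
    """Shuffle participants and split them into treatment and control groups."""
    rng = random.Random(seed)          # seeded generator so a run can be repeated
    shuffled = list(participant_ids)   # copy so the caller's data is untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"treatment": shuffled[:half], "control": shuffled[half:]}


# Twenty hypothetical participants, numbered 1 to 20.
groups = randomly_assign(range(1, 21), seed=42)
print(len(groups["treatment"]), len(groups["control"]))
```

Because chance alone decides who lands in which group, pre-existing differences between participants should tend to balance out across the conditions.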

These concepts should not be confused with predictor and outcome variables.

Examples of Independent and Dependent Variables

1. Gatorade and Improved Athletic Performance

A sports medicine researcher has been hired by Gatorade to test the effects of its sports drink on athletic performance. The company wants to claim that when an athlete drinks Gatorade, their performance will improve.

If they can back up that claim with hard scientific data, that would be great for sales.

So, the researcher goes to a nearby university and randomly selects both male and female athletes from several sports: track and field, volleyball, basketball, and football. Each athlete will run on a treadmill for one hour while their heart rate is tracked.

All of the athletes are given the exact same amount of liquid to consume 30 minutes before and during their run. Half are given Gatorade, and the other half are given water, but no one knows which they are given because both liquids have been colored.

In this example, the independent variable is Gatorade, and the dependent variable is heart rate.

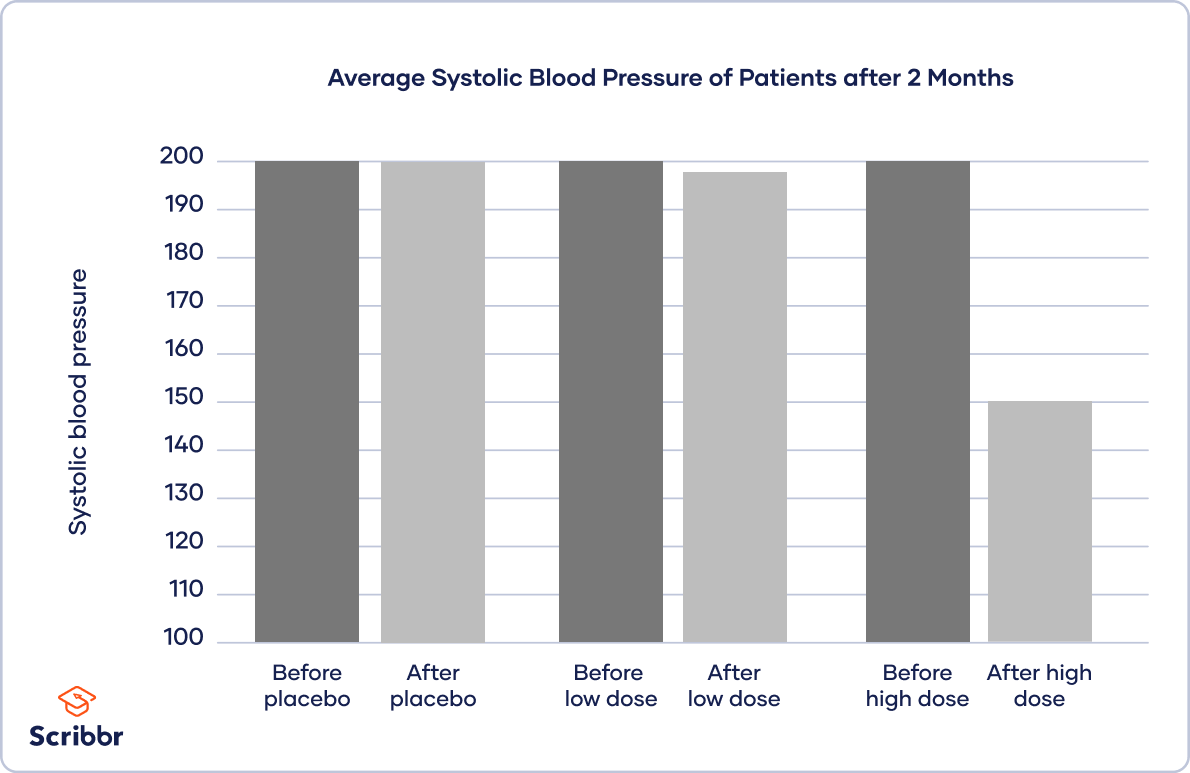

2. Chemotherapy and Cancer

A hospital is investigating the effectiveness of a new type of chemotherapy on cancer. The researchers identified 120 patients with relatively similar types of cancerous tumors in both size and stage of progression.

The patients are randomly assigned to one of three groups: one group receives no chemotherapy, one group receives a low dose of chemotherapy, and one group receives a high dose of chemotherapy.

Each group receives chemotherapy treatment three times a week for two months, except for the no-treatment group. At the end of two months, the doctors measure the size of each patient’s tumor.

In this study, despite the ethical issues (remember this is just a hypothetical example), the independent variable is chemotherapy, and the dependent variable is tumor size.

3. Interior Design Color and Eating Rate

A well-known fast-food corporation wants to know if the color of the interior of their restaurants will affect how fast people eat. Of course, they would prefer that consumers enter and exit quickly to increase sales volume and profit.

So, they rent space in a large shopping mall and create three different simulated restaurant interiors of different colors. One room is painted mostly white with red trim and seats; one room is painted mostly white with blue trim and seats; and one room is painted mostly white with off-white trim and seats.

Next, they randomly select shoppers on Saturdays and Sundays to eat for free in one of the three rooms. Each shopper is given a box of the same food and drink items and sent to one of the rooms. The researchers record how much time elapses from the moment they enter the room to the moment they leave.

The independent variable is the color of the room, and the dependent variable is the amount of time spent in the room eating.

4. Hair Color and Attraction

A large multinational cosmetics company wants to know if the color of a woman’s hair affects the level of perceived attractiveness in males. So, they use Photoshop to manipulate the same image of a female by altering the color of her hair: blonde, brunette, red, and brown.

Next, they randomly select university males to enter their testing facilities. Each participant sits in front of a computer screen and responds to questions on a survey. At the end of the survey, the screen shows one of the photos of the female.

At the same time, software on the computer that utilizes the computer’s camera is measuring each male’s pupil dilation. The researchers believe that larger dilation indicates greater perceived attractiveness.

The independent variable is hair color, and the dependent variable is pupil dilation.

5. Mozart and Math

After many claims that listening to Mozart will make you smarter, a group of education specialists decides to put it to the test. So, first, they go to a nearby school in a middle-class neighborhood.

During the first three months of the academic year, they randomly select some 5th-grade classrooms to listen to Mozart during their lessons and exams. Other 5th-grade classrooms will not listen to any music during their lessons and exams.

The researchers then compare the scores of the exams between the two groups of classrooms.

Although there are a lot of obvious limitations to this hypothetical, it is the first step.

The independent variable is Mozart, and the dependent variable is exam scores.

6. Essential Oils and Sleep

A company that specializes in essential oils wants to examine the effects of lavender on sleep quality. They hire a sleep research lab to conduct the study. The researchers at the lab have their usual test volunteers sleep in individual rooms every night for one week.

The conditions of each room are all exactly the same, except that half of the rooms have lavender released into the rooms and half do not. While the study participants are sleeping, their heart rates and amount of time spent in deep sleep are recorded with high-tech equipment.

At the end of the study, the researchers compare the total amount of time spent in deep sleep of the lavender-room participants with the no lavender-room participants.

The independent variable in this sleep study is lavender, and the dependent variable is the total amount of time spent in deep sleep.

7. Teaching Style and Learning

A group of teachers is interested in which teaching method will work best for developing critical thinking skills.

So, they train a group of teachers in three different teaching styles: teacher-centered, where the teacher tells the students all about critical thinking; student-centered, where the students practice critical thinking and receive teacher feedback; and AI-assisted teaching, where the teacher uses a special software program to teach critical thinking.

At the end of three months, all the students take the same test that assesses critical thinking skills. The teachers then compare the scores of each of the three groups of students.

The independent variable is the teaching method, and the dependent variable is performance on the critical thinking test.

8. Concrete Mix and Bridge Strength

A chemicals company has developed three different versions of their concrete mix. Each version contains a different blend of specially developed chemicals. The company wants to know which version is the strongest.

So, they create three bridge molds that are identical in every way. They fill each mold with one of the different concrete mixtures. Next, they test the strength of each bridge by placing progressively more weight on its center until the bridge collapses.

In this study, the independent variable is the concrete mixture, and the dependent variable is the amount of weight at collapse.

9. Recipe and Consumer Preferences

People in the pizza business know that the crust is key. Many companies, large and small, will keep their recipe a top secret. Before rolling out a new type of crust, the company decides to conduct some research on consumer preferences.

The company has prepared three versions of their crust that vary in crunchiness: a little crunchy, very crunchy, and super crunchy. They already have a pool of consumers that fit their customer profile, and they often use them for testing.

Each participant sits in a booth and takes a bite of one version of the crust. They then indicate how much they liked it by pressing one of 5 buttons: didn’t like at all, somewhat liked, liked, liked very much, loved it.

The independent variable is the level of crust crunchiness, and the dependent variable is how much it was liked.

10. Protein Supplements and Muscle Mass

A large food company is considering entering the health and nutrition sector. Their R&D food scientists have developed a protein supplement that is designed to help build muscle mass for people that work out regularly.

The company approaches several gyms near its headquarters. They enlist the cooperation of over 120 gym rats that work out 5 days a week. Their muscle mass is measured, and only those with a lower level are selected for the study, leaving a total of 80 study participants.

They randomly assign half of the participants to take the recommended dosage of their supplement every day for three months after each workout. The other half takes the same amount of something that looks the same but actually does nothing to the body.

At the end of three months, the muscle mass of all participants is measured.

The independent variable is the supplement, and the dependent variable is muscle mass.
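
The comparison at the end of a study like this one can be sketched as a difference between group means on the dependent variable. The numbers below are invented purely for illustration, and the helper name `mean_difference` is an assumption. A real analysis would also apply a significance test, which this sketch omits.

```python
from statistics import mean


def mean_difference(treatment_scores, control_scores):
    """Return mean(treatment) minus mean(control) on the dependent variable."""
    return mean(treatment_scores) - mean(control_scores)


# Hypothetical muscle mass (kg) after three months, invented for illustration.
supplement_group = [31.2, 29.8, 30.5, 32.0, 30.0]   # took the supplement (IV present)
placebo_group = [29.9, 30.1, 29.5, 30.4, 29.6]      # took the look-alike placebo

difference = mean_difference(supplement_group, placebo_group)
print(round(difference, 2))
```

A positive difference would be consistent with the supplement increasing muscle mass, but only because participants were randomly assigned and the placebo group controls for everything except the supplement itself.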

11. Air Bags and Skull Fractures

In the early days of airbags, automobile companies conducted a great deal of testing. At first, many people in the industry didn’t think airbags would be effective at all. Fortunately, there was a way to test this theory objectively.

In a representative example: Several crash cars were outfitted with an airbag, and an equal number were not. All crash cars were of the same make, year, and model. Then the crash experts rammed each car into a crash wall at the same speed. Sensors on the crash dummy skulls allowed for a scientific analysis of how much damage a human skull would incur.

The amount of skull damage of dummies in cars with airbags was then compared with those without airbags.

The independent variable was the airbag and the dependent variable was the amount of skull damage.

12. Vitamins and Health

Some people take vitamins every day. A group of health scientists decides to conduct a study to determine if taking vitamins improves health.

They randomly select 1,000 people that are relatively similar in terms of their physical health. The key word here is “similar.”

Because the scientists have an unlimited budget (and because this is a hypothetical example), all of the participants have the same meals delivered to their homes (breakfast, lunch, and dinner) every day for one year.

In addition, the scientists randomly assign half of the participants to take a set of vitamins, supplied by the researchers every day for 1 year. The other half do not take the vitamins.

At the end of one year, the health of all participants is assessed, using blood pressure and cholesterol level as the key measurements.

In this highly unrealistic study, the independent variable is vitamins, and the dependent variable is health, as measured by blood pressure and cholesterol levels.

13. Meditation and Stress

Does practicing meditation reduce stress? If you have ever wondered if this is true or not, then you are in luck because there is a way to know one way or the other.

All we have to do is find 90 people that are similar in age, stress levels, diet and exercise, and as many other factors as we can think of.

Next, we randomly assign each person to one of three conditions: practicing meditation every day, practicing three days a week, or not practicing at all. After three months, we measure the stress levels of each person and compare the groups.

How should we measure stress? Well, there are a lot of ways. We could measure blood pressure or the amount of the stress hormone cortisol in their blood, or we could use a paper-and-pencil measure such as a questionnaire that asks them how much stress they feel.

In this study, the independent variable is meditation and the dependent variable is the amount of stress (however it is measured).

14. Video Games and Aggression

When video games started to become increasingly graphic, it was a huge concern in many countries in the world. Educators, social scientists, and parents were shocked at how graphic games were becoming.

Since then, there have been hundreds of studies conducted by psychologists and other researchers. A lot of those studies used an experimental design that involved males of various ages randomly assigned to play a graphic or non-graphic video game.

Afterward, their level of aggression was measured via a wide range of methods, including direct observations of their behavior, their actions when given the opportunity to be aggressive, or a variety of other measures.

So many studies have used so many different ways of measuring aggression.

In these experimental studies, the independent variable was graphic video games, and the dependent variable was observed level of aggression.

15. Vehicle Exhaust and Cognitive Performance

Car pollution is a concern for a lot of reasons. In addition to being bad for the environment, car exhaust may cause damage to the brain and impair cognitive performance.

One way to examine this possibility would be to conduct an animal study. The research would look something like this: laboratory rats would be raised in three different rooms that varied in the degree of car exhaust circulating in the room: no exhaust, little exhaust, or a lot of exhaust.

After a certain period of time, perhaps several months, the effects on cognitive performance could be measured.

One common way of assessing cognitive performance in laboratory rats is by measuring the amount of time it takes to run a maze successfully. It would also be possible to examine the physical effects of car exhaust on the brain by conducting an autopsy.

In this animal study, the independent variable would be car exhaust and the dependent variable would be amount of time to run a maze.


The experiment is an incredibly valuable way to answer scientific questions regarding the cause and effect of certain variables. By manipulating the level of an independent variable and observing corresponding changes in a dependent variable, scientists can gain an understanding of many phenomena.

For example, scientists can learn if graphic video games make people more aggressive, if meditation reduces stress, if Gatorade improves athletic performance, and even if certain medical treatments can cure cancer.

The determination of causality is the key benefit of manipulating the independent variable and then observing changes in the dependent variable. Other research methodologies can reveal factors that are related to the dependent variable or associated with the dependent variable, but only when the independent variable is controlled by the researcher can causality be determined.



Research Variables 101

Independent variables, dependent variables, control variables and more

By: Derek Jansen (MBA) | Expert Reviewed By: Kerryn Warren (PhD) | January 2023

If you’re new to the world of research, especially scientific research, you’re bound to run into the concept of variables, sooner or later. If you’re feeling a little confused, don’t worry – you’re not the only one! Independent variables, dependent variables, confounding variables – it’s a lot of jargon. In this post, we’ll unpack the terminology surrounding research variables using straightforward language and loads of examples.

Overview: Variables In Research


What (exactly) is a variable?

The simplest way to understand a variable is as any characteristic or attribute that can experience change or vary over time or context – hence the name “variable”. For example, the dosage of a particular medicine could be classified as a variable, as the amount can vary (i.e., a higher dose or a lower dose). Similarly, gender, age or ethnicity could be considered demographic variables, because each person varies in these respects.

Within research, especially scientific research, variables form the foundation of studies, as researchers are often interested in how one variable impacts another, and the relationships between different variables. For example:

- How someone’s age impacts their sleep quality

- How different teaching methods impact learning outcomes

- How diet impacts weight (gain or loss)

As you can see, variables are often used to explain relationships between different elements and phenomena. In scientific studies, especially experimental studies, the objective is often to understand the causal relationships between variables. In other words, the role of cause and effect between variables. This is achieved by manipulating certain variables while controlling others – and then observing the outcome. But, we’ll get into that a little later…

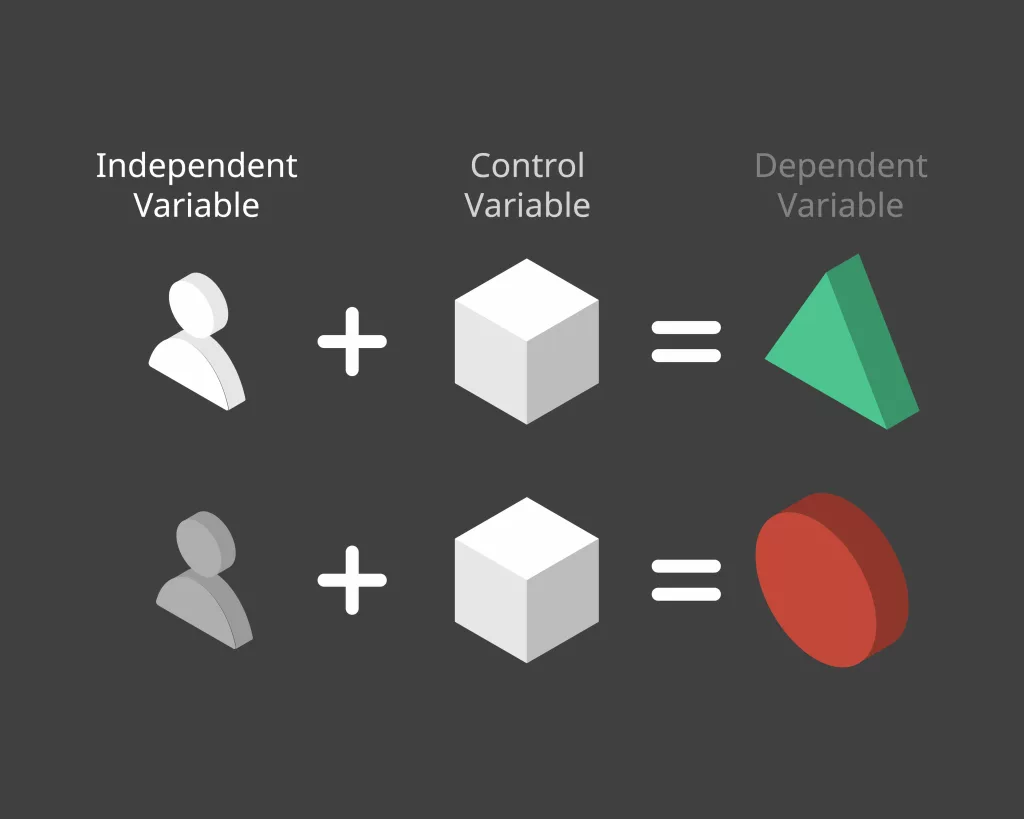

The “Big 3” Variables

Variables can be a little intimidating for new researchers because there are a wide variety of variables, and oftentimes, there are multiple labels for the same thing. To lay a firm foundation, we’ll first look at the three main types of variables, namely:

- Independent variables (IV)

- Dependent variables (DV)

- Control variables

What is an independent variable?

Simply put, the independent variable is the “cause” in the relationship between two (or more) variables. In other words, when the independent variable changes, it has an impact on another variable.

For example:

- Increasing the dosage of a medication (Variable A) could result in better (or worse) health outcomes for a patient (Variable B)

- Changing a teaching method (Variable A) could impact the test scores that students earn in a standardised test (Variable B)

- Varying one’s diet (Variable A) could result in weight loss or gain (Variable B).

It’s useful to know that independent variables can go by a few different names, including explanatory variables (because they explain an event or outcome) and predictor variables (because they predict the value of another variable). Terminology aside though, the most important takeaway is that independent variables are assumed to be the “cause” in any cause-effect relationship. As you can imagine, these types of variables are of major interest to researchers, as many studies seek to understand the causal factors behind a phenomenon.

What is a dependent variable?

While the independent variable is the “cause”, the dependent variable is the “effect” – or rather, the affected variable. In other words, the dependent variable is the variable that is assumed to change as a result of a change in the independent variable.

Keeping with the previous example, let’s look at some dependent variables in action:

- Health outcomes (DV) could be impacted by dosage changes of a medication (IV)

- Students’ scores (DV) could be impacted by teaching methods (IV)

- Weight gain or loss (DV) could be impacted by diet (IV)

In scientific studies, researchers will typically pay very close attention to the dependent variable (or variables), carefully measuring any changes in response to hypothesised independent variables. This can be tricky in practice, as it’s not always easy to reliably measure specific phenomena or outcomes – or to be certain that the actual cause of the change is in fact the independent variable.

As the adage goes, correlation is not causation. In other words, just because two variables have a relationship doesn’t mean that it’s a causal relationship – they may just happen to vary together. For example, you could find a correlation between the number of people who own a certain brand of car and the number of people who have a certain type of job. Just because these two numbers are correlated, it doesn’t mean that owning that brand of car causes someone to have that type of job or vice versa. The correlation could, for example, be caused by another factor such as income level or age group, which would affect both car ownership and job type.
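To make the car-and-job example concrete, here is a minimal simulation sketch. Everything in it is an assumption for illustration: the data are randomly generated, and `car_score` and `job_score` are hypothetical stand-ins for how likely someone is to own the car or hold the job. Income drives both, and neither affects the other.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Simulated (made-up) data: income feeds into both variables.
income = [rng.gauss(0, 1) for _ in range(5000)]
car_score = [i + rng.gauss(0, 1) for i in income]  # hypothetical ownership tendency
job_score = [i + rng.gauss(0, 1) for i in income]  # hypothetical job-type tendency

r_raw = pearson(car_score, job_score)

# Because we built the data ourselves, we know income enters each score with a
# weight of 1, so subtracting it leaves only the independent noise.
car_resid = [c - i for c, i in zip(car_score, income)]
job_resid = [j - i for j, i in zip(job_score, income)]
r_adjusted = pearson(car_resid, job_resid)

print(f"raw correlation: {r_raw:.2f}")
print(f"correlation once income is removed: {r_adjusted:.2f}")
```

The two scores correlate clearly even though neither influences the other, and the relationship all but disappears once the shared cause is taken out. In a real study you wouldn’t know the true weights, so you’d estimate them (for example, with regression) rather than subtract them directly.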

To confidently establish a causal relationship between an independent variable and a dependent variable (i.e., X causes Y), you’ll typically need an experimental design, where you have complete control over the environment and the variables of interest. But even so, this doesn’t always translate into the “real world”. Simply put, what happens in the lab sometimes stays in the lab!

As an alternative to pure experimental research, correlational or “quasi-experimental” research (where the researcher cannot fully manipulate the variables or randomly assign participants) can be done on a much larger scale more easily, allowing one to understand specific relationships in the real world. These types of studies also assume some causality between independent and dependent variables, but it’s not always clear. So, if you go this route, you need to be cautious in terms of how you describe the impact and causality between variables and be sure to acknowledge any limitations in your own research.

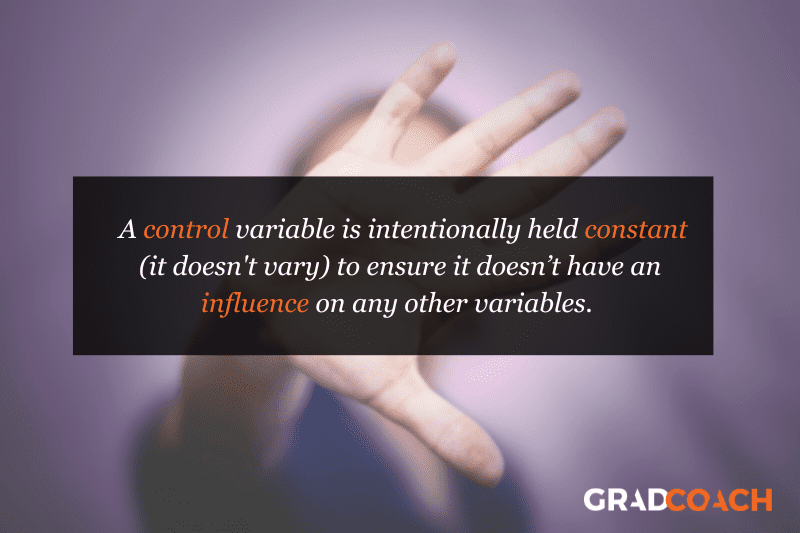

What is a control variable?

In an experimental design, a control variable (or controlled variable) is a variable that is intentionally held constant to ensure it doesn’t have an influence on any other variables. As a result, this variable remains unchanged throughout the course of the study. In other words, it’s a variable that’s not allowed to vary – tough life 🙂

As we mentioned earlier, one of the major challenges in identifying and measuring causal relationships is that it’s difficult to isolate the impact of variables other than the independent variable. Simply put, there’s always a risk that there are factors beyond the ones you’re specifically looking at that might be impacting the results of your study. So, to minimise the risk of this, researchers will attempt (as best possible) to hold other variables constant. These factors are then considered control variables.

Some examples of variables that you may need to control include:

- Temperature

- Time of day

- Noise or distractions

Which specific variables need to be controlled for will vary tremendously depending on the research project at hand, so there’s no generic list of control variables to consult. As a researcher, you’ll need to think carefully about all the factors that could vary within your research context and then consider how you’ll go about controlling them. A good starting point is to look at previous studies similar to yours and pay close attention to which variables they controlled for.

Of course, you won’t always be able to control every possible variable, and so, in many cases, you’ll just have to acknowledge their potential impact and account for them in the conclusions you draw. Every study has its limitations, so don’t get fixated or discouraged by troublesome variables. Nevertheless, always think carefully about the factors beyond what you’re focusing on – don’t make assumptions!

Other types of variables

As we mentioned, independent, dependent and control variables are the most common variables you’ll come across in your research, but they’re certainly not the only ones you need to be aware of. Next, we’ll look at a few “secondary” variables that you need to keep in mind as you design your research.

- Moderating variables

- Mediating variables

- Confounding variables

- Latent variables

Let’s jump into it…

What is a moderating variable?

A moderating variable is a variable that influences the strength or direction of the relationship between an independent variable and a dependent variable. In other words, moderating variables affect how much (or how little) the IV affects the DV, or whether the IV has a positive or negative relationship with the DV (i.e., moves in the same or opposite direction).

For example, in a study about the effects of sleep deprivation on academic performance, gender could be used as a moderating variable to see if there are any differences in how men and women respond to a lack of sleep. In such a case, one may find that gender has an influence on how much students’ scores suffer when they’re deprived of sleep.

It’s important to note that while moderators can have an influence on outcomes, they don’t necessarily cause them; rather they modify or “moderate” existing relationships between other variables. This means that it’s possible for two groups that are otherwise similar, but differ on the moderator, to experience very different results from the same experiment or study design.
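Here’s a small sketch of what moderation looks like in data, loosely following the sleep deprivation example. All numbers are invented: we assume each lost hour of sleep costs a hypothetical group A 2 points and a hypothetical group B 6 points, and the groups are labelled A and B so as not to suggest any real finding. The moderator shows up as a difference in slopes.

```python
import random

def slope(xs, ys):
    """Least-squares slope of ys regressed on xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx

rng = random.Random(1)  # fixed seed so the sketch is repeatable

def simulate_group(true_effect, n=2000):
    """Return (hours_lost, score) lists for one group; all values are made up."""
    hours_lost = [rng.uniform(0, 4) for _ in range(n)]
    score = [70 + true_effect * h + rng.gauss(0, 5) for h in hours_lost]
    return hours_lost, score

# Assumed effects: -2 points per lost hour in group A, -6 in group B.
slope_a = slope(*simulate_group(-2.0))
slope_b = slope(*simulate_group(-6.0))

print(f"group A: {slope_a:.2f} points per lost hour")
print(f"group B: {slope_b:.2f} points per lost hour")
```

Group membership doesn’t cause the drop in scores here; it changes how steep the drop is, which is exactly what a moderator does.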

What is a mediating variable?

Mediating variables are often used to explain the relationship between the independent and dependent variable(s). For example, if you were researching the effects of age on job satisfaction, then education level could be considered a mediating variable, as it may explain why older people have higher job satisfaction than younger people – they may have more experience or better qualifications, which lead to greater job satisfaction.

Mediating variables also help researchers understand the mechanism through which one factor influences another. For instance, if you wanted to study the effect of stress on academic performance, then coping strategies might act as a mediating factor: stress levels shape the coping strategies students adopt, and those strategies in turn affect academic performance. In this case, part of the effect of stress on performance would run through how students cope rather than through stress alone.

In addition, mediating variables can provide insight into causal relationships between two variables by helping researchers determine whether changes in one factor directly cause changes in another – or whether there is an indirect relationship between them mediated by some third factor(s). For instance, if you wanted to investigate the impact of parental involvement on student achievement, you might consider family dynamics as a potential mediator, since parental involvement could change family dynamics, which could in turn influence student achievement.
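One way to picture a mediator is as the middle link in a chain: the independent variable changes the mediator, and the mediator changes the dependent variable. The sketch below simulates such a chain with made-up data. The path strengths 0.8 and 0.5 are assumptions chosen for illustration, and there is deliberately no direct path from `x` to `y`.

```python
import random

def slope(xs, ys):
    """Least-squares slope of ys regressed on xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx

rng = random.Random(2)  # fixed seed so the sketch is repeatable
n = 5000

# Assumed chain: x (IV) -> m (mediator) -> y (DV), with no direct x -> y path.
x = [rng.gauss(0, 1) for _ in range(n)]
m = [0.8 * xi + rng.gauss(0, 1) for xi in x]  # path a, assumed to be 0.8
y = [0.5 * mi + rng.gauss(0, 1) for mi in m]  # path b, assumed to be 0.5

path_a = slope(x, m)          # effect of the IV on the mediator
path_b = slope(m, y)          # effect of the mediator on the DV
total_effect = slope(x, y)    # overall effect of the IV on the DV
indirect_effect = path_a * path_b

print(f"total effect of x on y: {total_effect:.2f}")
print(f"indirect effect (a * b): {indirect_effect:.2f}")
```

Because the whole effect runs through the mediator in this simulation, the total effect and the indirect effect come out nearly equal. In real data a direct path may also exist, and estimating path b would then require a model that includes both the mediator and the independent variable.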

What is a confounding variable?

A confounding variable (also known as a third variable or lurking variable) is an extraneous factor that can influence the relationship between two variables being studied. Specifically, for a variable to be considered a confounding variable, it needs to meet two criteria:

- It must be correlated with the independent variable (this can be causal or not)

- It must have a causal impact on the dependent variable (i.e., influence the DV)

Some common examples of confounding variables include demographic factors such as gender, ethnicity, socioeconomic status, age, education level, and health status. In addition to these, there are also environmental factors to consider. For example, air pollution could confound the impact of the variables of interest in a study investigating health outcomes.

Naturally, it’s important to identify as many confounding variables as possible when conducting your research, as they can heavily distort the results and lead you to draw incorrect conclusions. So, always think carefully about what factors may have a confounding effect on your variables of interest and try to manage these as best you can.
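To see how much distortion a confounder can introduce, here is a rough sketch using simulated data. The numbers are assumptions: the true effect of the IV on the DV is set to 1.0, and a binary confounder (think of a hypothetical low versus high pollution area) both shifts the IV and adds to the DV, so it meets both criteria above.

```python
import random

def slope(xs, ys):
    """Least-squares slope of ys regressed on xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx

rng = random.Random(3)  # fixed seed so the sketch is repeatable
TRUE_EFFECT = 1.0       # assumed causal effect of the IV on the DV

rows = []
for _ in range(6000):
    confounder = rng.choice([0, 1])
    iv = 2.0 * confounder + rng.gauss(0, 1)  # criterion 1: correlated with the IV
    dv = TRUE_EFFECT * iv + 3.0 * confounder + rng.gauss(0, 1)  # criterion 2: affects the DV
    rows.append((confounder, iv, dv))

# Naive estimate: ignore the confounder entirely.
naive = slope([r[1] for r in rows], [r[2] for r in rows])

# Adjusted estimate: fit the slope within each confounder level, then average.
within = []
for level in (0, 1):
    subset = [r for r in rows if r[0] == level]
    within.append(slope([r[1] for r in subset], [r[2] for r in subset]))
adjusted = sum(within) / len(within)

print(f"naive estimate: {naive:.2f}")
print(f"adjusted estimate: {adjusted:.2f} (true effect is {TRUE_EFFECT})")
```

The naive estimate overstates the effect, while comparing like with like (stratifying on the confounder) brings the estimate back towards the true value. Stratifying is only one way to manage a confounder, and it only works for confounders you’ve actually measured.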

What is a latent variable?

Latent variables are unobservable factors that can influence the behaviour of individuals and explain certain outcomes within a study. They’re also known as hidden or underlying variables, and what makes them rather tricky is that they can’t be directly observed or measured. Instead, latent variables must be inferred from other observable data points such as responses to surveys or experiments.

For example, in a study of mental health, the variable “resilience” could be considered a latent variable. It can’t be directly measured, but it can be inferred from measures of mental health symptoms, stress, and coping mechanisms. The same applies to a lot of concepts we encounter every day – for example:

- Emotional intelligence

- Quality of life

- Business confidence

- Ease of use

One way in which we overcome the challenge of measuring the immeasurable is latent variable models (LVMs). An LVM is a type of statistical model that describes a relationship between observed variables and one or more unobserved (latent) variables. These models allow researchers to uncover patterns in their data that may not have been visible before because of the complexity and interrelatedness of the variables involved. Those patterns can then inform hypotheses about previously unknown cause-and-effect relationships among those variables. Powerful stuff, we say!
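The sketch below is not a latent variable model (those are usually fitted with dedicated statistical software). It only illustrates the underlying idea that several noisy observed indicators, taken together, can track a hidden variable better than any single one. The data are simulated, and `latent` is visible here only because we generated it ourselves; in a real study it would be unknown.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

rng = random.Random(4)  # fixed seed so the sketch is repeatable
n = 3000

# Hidden trait (e.g. "resilience"); known here only because the data are made up.
latent = [rng.gauss(0, 1) for _ in range(n)]

# Three observed indicators, each equal to the latent trait plus its own noise.
indicators = [[value + rng.gauss(0, 1) for value in latent] for _ in range(3)]

# A simple composite: the average of the three indicators for each person.
composite = [sum(values) / len(values) for values in zip(*indicators)]

r_single = pearson(indicators[0], latent)
r_composite = pearson(composite, latent)

print(f"one indicator vs latent trait: {r_single:.2f}")
print(f"composite vs latent trait: {r_composite:.2f}")
```

Averaging cancels out some of the noise in the individual indicators, so the composite tracks the hidden trait more closely than a single measure does.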

Let’s recap

In the world of scientific research, there’s no shortage of variable types, some of which have multiple names and some of which overlap with each other. In this post, we’ve covered some of the popular ones, but remember that this is not an exhaustive list.

To recap, we’ve explored:

- Independent variables (the “cause”)

- Dependent variables (the “effect”)

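- Control variables (the variable that’s not allowed to vary)

- Moderating variables (the variable that changes the strength or direction of the IV-DV relationship)

- Mediating variables (the variable that explains how the IV affects the DV)

- Confounding variables (the extraneous variable that distorts the IV-DV relationship)

- Latent variables (the variable that can’t be directly observed or measured)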

Independent and Dependent Variables Examples

The independent and dependent variables are key to any scientific experiment, but how do you tell them apart? Here are the definitions of independent and dependent variables, examples of each type, and tips for telling them apart and graphing them.

Independent Variable

The independent variable is the factor the researcher changes or controls in an experiment. It is called independent because it does not depend on any other variable. The independent variable may be called the “controlled variable” because it is the one that is changed or controlled. This is different from the “control variable,” which is a variable that is held constant so it won’t influence the outcome of the experiment.

Dependent Variable

The dependent variable is the factor that changes in response to the independent variable. It is the variable that you measure in an experiment. The dependent variable may be called the “responding variable.”

Examples of Independent and Dependent Variables

Here are several examples of independent and dependent variables in experiments:

- In a study to determine whether how long a student sleeps affects test scores, the independent variable is the length of time spent sleeping while the dependent variable is the test score.

- You want to know which brand of fertilizer is best for your plants. The brand of fertilizer is the independent variable. The health of the plants (height, amount and size of flowers and fruit, color) is the dependent variable.

- You want to compare brands of paper towels, to see which holds the most liquid. The independent variable is the brand of paper towel. The dependent variable is the volume of liquid absorbed by the paper towel.

- You suspect the amount of television a person watches is related to their age. Age is the independent variable. How many minutes or hours of television a person watches is the dependent variable.

- You think rising sea temperatures might affect the amount of algae in the water. The water temperature is the independent variable. The mass of algae is the dependent variable.

- In an experiment to determine how far people can see into the infrared part of the spectrum, the wavelength of light is the independent variable and whether the light is observed is the dependent variable.

- If you want to know whether caffeine affects your appetite, the presence/absence or amount of caffeine is the independent variable. Appetite is the dependent variable.

- You want to know which brand of microwave popcorn pops the best. The brand of popcorn is the independent variable. The number of popped kernels is the dependent variable. Of course, you could also measure the number of unpopped kernels instead.

- You want to determine whether a chemical is essential for rat nutrition, so you design an experiment. The presence/absence of the chemical is the independent variable. The health of the rat (whether it lives and reproduces) is the dependent variable. A follow-up experiment might determine how much of the chemical is needed. Here, the amount of chemical is the independent variable and the rat health is the dependent variable.

How to Tell the Independent and Dependent Variable Apart

If you’re having trouble identifying the independent and dependent variable, here are a few ways to tell them apart. First, remember the dependent variable depends on the independent variable. It helps to write out the variables as an if-then or cause-and-effect sentence that shows the independent variable causes an effect on the dependent variable. If you mix up the variables, the sentence won’t make sense.

Example: The amount you eat (independent variable) affects how much you weigh (dependent variable).

This makes sense, but if you write the sentence the other way, you can tell it’s incorrect:

Example: How much you weigh affects how much you eat. (Well, it could make sense, but you can see it’s an entirely different experiment.)

If-then statements also work:

Example: If you change the color of light (independent variable), then it affects plant growth (dependent variable).

Switching the variables makes no sense:

Example: If plant growth rate changes, then it affects the color of light.

Sometimes you don’t control either variable, like when you gather data to see if there is a relationship between two factors. This can make identifying the variables a bit trickier, but establishing a logical cause and effect relationship helps:

Example: If age increases (independent variable), then average salary increases (dependent variable).

If you switch them, the statement doesn’t make sense:

Example: If you increase salary, then age increases.

How to Graph Independent and Dependent Variables

Plot or graph independent and dependent variables using the standard method. The independent variable goes on the x-axis, while the dependent variable goes on the y-axis. Remember the acronym DRY MIX to keep the variables straight:

- D = Dependent variable

- R = Responding variable

- Y = Graph on the y-axis or vertical axis

- M = Manipulated variable

- I = Independent variable

- X = Graph on the x-axis or horizontal axis


Independent vs Dependent Variables: Definitions & Examples

A variable is an important element of research. It is a characteristic, number, or quantity of any category that can be measured or counted and whose value may change with time or other parameters.

Variables are defined in different ways in different fields. For instance, in mathematics, a variable is an alphabetic character that expresses a numerical value. In algebra, a variable represents an unknown entity, mostly denoted by a, b, c, x, y, z, etc. In statistics, variables represent real-world conditions or factors. Despite the differences in definitions, in all fields, variables represent the entity that changes and help us understand how one factor may or may not influence another factor.

Variables in research and statistics are of different types: independent, dependent, quantitative (discrete or continuous), qualitative (nominal/categorical, ordinal), intervening, moderating, extraneous, confounding, control, and composite. In this article we compare the first two types: independent vs dependent variables.

What is a variable?

Researchers conduct experiments to understand the cause-and-effect relationships between various entities. In such experiments, the entities whose values change are called variables. These variables describe the relationships among various factors and help in drawing conclusions in experiments. They help in understanding how some factors influence others. Some examples of variables include age, gender, race, income, weight, etc.

As mentioned earlier, different types of variables are used in research. Of these, we will compare the most common types: independent vs dependent variables. The independent variable is the cause and the dependent variable is the effect, that is, independent variables influence dependent variables. In research, a dependent variable is the outcome of interest of the study and the independent variable is the factor that may influence the outcome. Let’s explain this with an independent and dependent variable example: In a study to analyze the effect of antibiotic use on microbial resistance, antibiotic use is the independent variable and microbial resistance is the dependent variable because antibiotic use affects microbial resistance (1).

What is an independent variable?

Here is a list of the important characteristics of independent variables (2,3).

- An independent variable is the factor that is being manipulated in an experiment.

- In a research study, independent variables affect or influence dependent variables and cause them to change.

- Independent variables help gather evidence and draw conclusions about the research subject.

- They’re also called predictors, factors, treatment variables, explanatory variables, and input variables.

- On graphs, independent variables are usually placed on the X-axis.

- Example: In a study on the relationship between screen time and sleep problems, screen time is the independent variable because it influences sleep (the dependent variable).

- In addition, some factors like age are independent variables because other variables such as a person’s income will not change their age.

Types of independent variables

Independent variables in research are of the following two types (4):

Quantitative

Quantitative independent variables differ in amounts or scales. They are numeric and answer questions like “how many” or “how often.”

Here are a few examples of quantitative independent variables:

- Differences in treatment dosages and frequencies: Useful in determining the appropriate dosage to get the desired outcome.

- Varying salinities: Useful in determining the range of salinity that organisms can tolerate.

Qualitative

Qualitative independent variables are non-numerical variables.

A few examples of qualitative independent variables are listed below:

- Different strains of a species: Useful in identifying the strain of a crop that is most resistant to a specific disease.

- Varying methods of how a treatment is administered—oral or intravenous.

A quantitative variable is represented by actual amounts and a qualitative variable by categories or groups.

What is a dependent variable?

Here are a few characteristics of dependent variables (3):

- A dependent variable represents a quantity whose value depends on the independent variable and how it is changed.

- The dependent variable is influenced by the independent variable under various circumstances.

- It is also known as the response variable and outcome variable.

- On graphs, dependent variables are placed on the Y-axis.

Here are a few dependent variable examples:

- In a study on the effect of exercise on mood, the dependent variable is mood because it may change with exercise.

- In a study on the effect of pH on enzyme activity, the enzyme activity is the dependent variable because it changes with changing pH.

Types of dependent variables

Dependent variables are of two types (5):

Continuous dependent variables

These variables can take on any value within a given range and are measured on a continuous scale, for example, weight, height, temperature, time, distance, etc.

Categorical or discrete dependent variables

These variables are divided into distinct categories. They are not measured on a continuous scale so only a limited number of values are possible, for example, gender, race, etc.

Differences between independent and dependent variables

The following table compares independent vs dependent variables.

| Aspect | Independent variable | Dependent variable |
|---|---|---|
| How to identify | Manipulated or controlled | Observed or measured |
| Purpose | Cause or predictor variable | Outcome or response variable |
| Relationship | Independent of other variables | Influenced by the independent variable |
| Control | Manipulated or assigned by researcher | Measured or observed during experiments |

Independent and dependent variable examples

Listed below are a few examples of research questions from various disciplines and their corresponding independent and dependent variables (6).

| Discipline | Research question | Independent variable | Dependent variable |
|---|---|---|---|
| Genetics | What is the relationship between genetics and susceptibility to diseases? | genetic factors | susceptibility to diseases |
| History | How do historical events influence national identity? | historical events | national identity |
| Political science | What is the effect of political campaign advertisements on voter behavior? | political campaign advertisements | voter behavior |
| Sociology | How does social media influence cultural awareness? | social media exposure | cultural awareness |
| Economics | What is the impact of economic policies on unemployment rates? | economic policies | unemployment rates |
| Literature | How does literary criticism affect book sales? | literary criticism | book sales |
| Geology | How do a region’s geological features influence the magnitude of earthquakes? | geological features | earthquake magnitudes |
| Environment | How do changes in climate affect wildlife migration patterns? | climate changes | wildlife migration patterns |
| Gender studies | What is the effect of gender bias in the workplace on job satisfaction? | gender bias | job satisfaction |
| Film studies | What is the relationship between cinematographic techniques and viewer engagement? | cinematographic techniques | viewer engagement |
| Archaeology | How does archaeological tourism affect local communities? | archaeological tourism | local community development |

Independent vs dependent variables in research

Experiments usually have at least two variables: independent and dependent. The independent variable is the entity that is being tested and the dependent variable is the result. Classifying independent and dependent variables as discrete and continuous can help in determining the type of analysis that is appropriate in any given research experiment, as shown in the table below (7).

| Independent variable | Categorical dependent variable | Continuous dependent variable |
|---|---|---|
| Categorical | Chi-square, logistic regression, phi, Cramer’s V | t-test, ANOVA, regression, point-biserial correlation |
| Continuous | Logistic regression, point-biserial correlation | Regression, correlation |
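The table above can also be read as a simple lookup from variable types to candidate analyses. The sketch below is one possible way to encode it, assuming the usual grouping of both variables into categorical and continuous; the name `suggest_tests` is hypothetical, and the output is a list of candidates to consider rather than a recommendation for any particular study.

```python
# Candidate analyses keyed by (independent variable type, dependent variable type).
# The grouping into "categorical" and "continuous" is an assumption of this sketch.
SUGGESTED_TESTS = {
    ("categorical", "categorical"): [
        "Chi-square", "Logistic regression", "Phi", "Cramer's V",
    ],
    ("categorical", "continuous"): [
        "t-test", "ANOVA", "Regression", "Point-biserial correlation",
    ],
    ("continuous", "categorical"): [
        "Logistic regression", "Point-biserial correlation",
    ],
    ("continuous", "continuous"): [
        "Regression", "Correlation",
    ],
}

def suggest_tests(iv_type, dv_type):
    """Return candidate analyses for the given IV and DV types."""
    key = (iv_type.strip().lower(), dv_type.strip().lower())
    if key not in SUGGESTED_TESTS:
        raise ValueError(
            "Variable types must each be 'categorical' or 'continuous', "
            f"got {iv_type!r} and {dv_type!r}"
        )
    return SUGGESTED_TESTS[key]

# Example: a categorical IV (treatment group) and a continuous DV (weight change).
print(suggest_tests("categorical", "continuous"))
```

The final choice still depends on details the lookup ignores, such as the number of groups, the sample size, and whether the assumptions of each test are met.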

Here are some more research questions and their corresponding independent and dependent variables (6).

| Research question | Independent variable | Dependent variable |
|---|---|---|
| What is the impact of online learning platforms on academic performance? | type of learning | academic performance |
| What is the association between exercise frequency and mental health? | exercise frequency | mental health |
| How does smartphone use affect productivity? | smartphone use | productivity levels |
| Does family structure influence adolescent behavior? | family structure | adolescent behavior |
| What is the impact of nonverbal communication on job interviews? | nonverbal communication | job interviews |

How to identify independent vs dependent variables

In addition to all the characteristics of independent and dependent variables listed previously, here are a few simple steps to identify the variable types in a research question (8).

- Keep in mind that there are no specific words that will always describe dependent and independent variables.

- If you’re given a paragraph, convert that into a question and identify specific words describing cause and effect.

- The word representing the cause is the independent variable and that describing the effect is the dependent variable.

Let’s try out these steps with an example.

A researcher wants to conduct a study to see if his new weight loss medication performs better than two best-selling alternatives. He wants to randomly select 20 subjects from Richmond, Virginia, aged 20 to 30 years and weighing above 60 pounds. Each subject will be randomly assigned to one of three treatment groups.

To identify the independent and dependent variables, we convert this paragraph into a question, as follows: Does the new medication perform better than the alternatives? Here, the medications are the independent variable and their performances or effect on the individuals are the dependent variable.
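The random assignment step in this example can be sketched in a few lines. This is only an illustration: the function name `assign_to_groups`, the subject IDs 1 to 20, and the group labels are all assumptions, and because 20 does not divide evenly by 3, the groups end up with 7, 7, and 6 subjects.

```python
import random

def assign_to_groups(subject_ids, group_names, seed=None):
    """Shuffle the subjects, then deal them out to the groups in turn."""
    rng = random.Random(seed)
    shuffled = list(subject_ids)
    rng.shuffle(shuffled)
    assignment = {name: [] for name in group_names}
    for position, subject in enumerate(shuffled):
        group = group_names[position % len(group_names)]
        assignment[group].append(subject)
    return assignment

# Hypothetical subject IDs and group labels for the weight loss study.
groups = assign_to_groups(
    range(1, 21),
    ["new medication", "alternative A", "alternative B"],
    seed=42,  # fixed seed only so the sketch is repeatable
)

for name, members in groups.items():
    print(f"{name}: {sorted(members)}")
```

Here the group label is the independent variable (the researcher assigns it), while the weight change measured later in each group is the dependent variable.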

Visualizing independent vs dependent variables

Data visualization is the graphical representation of information by using charts, graphs, and maps. Visualizations help in making data more understandable by making it easier to compare elements, identify trends and relationships (among variables), among other functions.

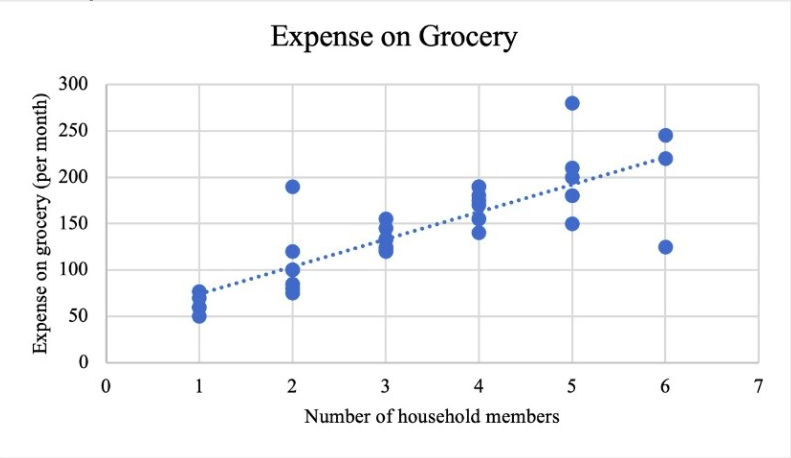

Bar graphs, pie charts, and scatter plots are among the most common ways to graphically represent variables. While pie charts and bar graphs are suitable for depicting categorical data, scatter plots are appropriate for quantitative data. The independent variable is usually placed on the X-axis and the dependent variable on the Y-axis.

Figure 1 is a scatter plot that depicts the relationship between the number of household members and their monthly grocery expenses (9). The number of household members is the independent variable and the expenses are the dependent variable. The graph shows that as the number of members increases, the expenditure also increases.

Key takeaways

Let’s summarize the key takeaways about independent vs dependent variables from this article:

- A variable is any entity being measured in a study.

- A dependent variable is often the focus of a research study and is the response or outcome. It depends on or varies with changes in other variables.

- Independent variables cause changes in dependent variables and don’t depend on other variables.

- An independent variable can influence a dependent variable, but a dependent variable cannot influence an independent variable.

- An independent variable is the cause and dependent variable is the effect.

Frequently asked questions

1. What are the different types of variables used in research?

The following table lists the different types of variables used in research (10).

| Type of variable | Description | Example |
|---|---|---|
| Categorical | Measures a construct that has different categories | gender, race, religious affiliation, political affiliation |
| Quantitative | Measures constructs that vary by degree of the amount | weight, height, age, intelligence scores |
| Independent (IV) | Measures constructs considered to be the cause | Higher education (IV) leads to higher income (DV) |
| Dependent (DV) | Measures constructs that are considered the effect | Exercise (IV) will reduce anxiety levels (DV) |
| Intervening or mediating (MV) | Measures constructs that intervene or stand in between the cause and effect | Incarcerated individuals are more likely to have psychiatric disorder (MV), which leads to disability in social roles |
| Confounding (CV) | “Rival explanations” that explain the cause-and-effect relationship | Age (CV) explains the relationship between increased shoe size and increase in intelligence in children |
| Control variable | Extraneous variables whose influence can be controlled or eliminated | Demographic data such as gender, socioeconomic status, age |

2. Why is it important to differentiate between independent vs dependent variables?

Differentiating between independent vs dependent variables is important to ensure the correct application in your own research and also the correct understanding of other studies. An incorrectly framed research question can lead to confusion and inaccurate results. An easy way to differentiate is to identify the cause and effect.

3. How are independent and dependent variables used in non-experimental research?

So far in this article we talked about variables in relation to experimental research, wherein variables are manipulated or measured to test a hypothesis, that is, to observe the effect on dependent variables. Let’s examine non-experimental research and how variable are used. 11 In non-experimental research, variables are not manipulated but are observed in their natural state. Researchers do not have control over the variables and cannot manipulate them based on their research requirements. For example, a study examining the relationship between income and education level would not manipulate either variable. Instead, the researcher would observe and measure the levels of each variable in the sample population. The level of control researchers have is the major difference between experimental and non-experimental research. Another difference is the causal relationship between the variables. In non-experimental research, it is not possible to establish a causal relationship because other variables may be influencing the outcome.

4. Are there any advantages and disadvantages of using independent vs dependent variables?

Here are a few advantages and disadvantages of both independent and dependent variables.(12)

Advantages:

- Dependent variables are not liable to any form of bias because they cannot be manipulated by researchers or other external factors.

- Independent variables are easily obtainable and don’t require complex mathematical procedures to be observed, as dependent variables do. This is because researchers can easily manipulate these variables or collect the data from respondents.

- Some independent variables are natural factors and cannot be manipulated. They are also easily obtainable because less time is required for data collection.

Disadvantages:

- Obtaining dependent variables is a very expensive and effort- and time-intensive process because these variables are obtained from longitudinal research by solving complex equations.

- Independent variables are prone to researcher and respondent bias because they can be manipulated, and this may affect the study results.

We hope this article has provided you with an insight into the use and importance of independent vs dependent variables, which can help you effectively use variables in your next research study.

1. Kaliyadan F, Kulkarni V. Types of variables, descriptive statistics, and sample size. Indian Dermatol Online J. 2019 Jan-Feb; 10(1): 82–86. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6362742/

2. What Is an independent variable? (with uses and examples). Indeed website. Accessed March 11, 2024. https://www.indeed.com/career-advice/career-development/what-is-independent-variable

3. Independent and dependent variables: Differences & examples. Statistics by Jim website. Accessed March 10, 2024. https://statisticsbyjim.com/regression/independent-dependent-variables/

4. Independent variable. Biology online website. Accessed March 9, 2024. https://www.biologyonline.com/dictionary/independent-variable#:~:text=The%20independent%20variable%20in%20research,how%20many%20or%20how%20often

5. Dependent variables: Definition and examples. Clubz Tutoring Services website. Accessed March 10, 2024. https://clubztutoring.com/ed-resources/math/dependent-variable-definitions-examples-6-7-2/

6. Research topics with independent and dependent variables. Good research topics website. Accessed March 12, 2024. https://goodresearchtopics.com/research-topics-with-independent-and-dependent-variables/

7. Levels of measurement and using the correct statistical test. Univariate quantitative methods. Accessed March 14, 2024. https://web.pdx.edu/~newsomj/uvclass/ho_levels.pdf

8. Easiest way to identify dependent and independent variables. Afidated website. Accessed March 15, 2024. https://www.afidated.com/2014/07/how-to-identify-dependent-and.html

9. Choosing data visualizations. Math for the people website. Accessed March 14, 2024. https://web.stevenson.edu/mbranson/m4tp/version1/environmental-racism-choosing-data-visualization.html

10. Trivedi C. Types of variables in scientific research. Concepts Hacked website. Accessed March 15, 2024. https://conceptshacked.com/variables-in-scientific-research/

11. Variables in experimental and non-experimental research. Statistics solutions website. Accessed March 14, 2024. https://www.statisticssolutions.com/variables-in-experimental-and-non-experimental-research/#:~:text=The%20independent%20variable%20would%20be,state%20instead%20of%20manipulating%20them

12. Dependent vs independent variables: 11 key differences. Formplus website. Accessed March 15, 2024. https://www.formpl.us/blog/dependent-independent-variables


Statistics By Jim

Making statistics intuitive

Independent and Dependent Variables: Differences & Examples

By Jim Frost

In this post, learn the definitions of independent and dependent variables, how to identify each type, how they differ between different types of studies, and see examples of them in use.

What is an Independent Variable?

Independent variables (IVs) are the ones that you include in the model to explain or predict changes in the dependent variable. The name helps you understand their role in statistical analysis. These variables are independent. In this context, independent indicates that they stand alone and other variables in the model do not influence them. The researchers are not seeking to understand what causes the independent variables to change.

Independent variables are also known as predictors, factors, treatment variables, explanatory variables, input variables, x-variables, and right-hand variables, because they appear on the right side of the equals sign in a regression equation. In notation, statisticians commonly denote them using Xs. On graphs, analysts place independent variables on the horizontal, or X, axis.

In machine learning, independent variables are known as features.

For example, in a plant growth study, the independent variables might be soil moisture (continuous) and type of fertilizer (categorical).

Statistical models will estimate effect sizes for the independent variables.

Related post: Effect Sizes in Statistics

Including independent variables in studies

The nature of independent variables changes based on the type of experiment or study:

Controlled experiments: Researchers systematically control and set the values of the independent variables. In randomized experiments, relationships between independent and dependent variables tend to be causal. The independent variables cause changes in the dependent variable.

Observational studies: Researchers do not set the values of the explanatory variables but instead observe them in their natural environment. When the independent and dependent variables are correlated, those relationships might not be causal.

When you include one independent variable in a regression model, you are performing simple regression. For more than one independent variable, it is multiple regression. Despite the different names, it’s really the same analysis with the same interpretations and assumptions.

Determining which IVs to include in a statistical model is known as model specification. That process involves in-depth research and many subject-area, theoretical, and statistical considerations. At its most basic level, you’ll want to include the predictors you are specifically assessing in your study and confounding variables that will bias your results if you don’t add them—particularly for observational studies.

For more information about choosing independent variables, read my post about Specifying the Correct Regression Model.

Related posts: Randomized Experiments, Observational Studies, Covariates, and Confounding Variables

What is a Dependent Variable?

The dependent variable (DV) is what you want to use the model to explain or predict. The values of this variable depend on other variables. It is the outcome that you’re studying. It’s also known as the response variable, outcome variable, and left-hand variable. Statisticians commonly denote it using a Y. Traditionally, graphs place dependent variables on the vertical, or Y, axis.

For example, in the plant growth study example, a measure of plant growth is the dependent variable. That is the outcome of the experiment, and we want to determine what affects it.

How to Identify Independent and Dependent Variables

If you’re reading a study’s write-up, how do you distinguish independent variables from dependent variables? Here are some tips!

Identifying IVs

How statisticians discuss independent variables changes depending on the field of study and type of experiment.

In randomized experiments, look for the following descriptions to identify the independent variables:

- Independent variables cause changes in another variable.

- The researchers control the values of the independent variables. They are controlled or manipulated variables.

- Experiments often refer to them as factors or experimental factors. In areas such as medicine, they might be risk factors.

- Assignment to treatment and control groups is always an independent variable. In this case, the independent variable is a categorical grouping variable that defines the experimental groups to which participants belong. Each group is a level of that variable.
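The last point can be sketched in a few lines of code. In this hypothetical data set, `group` is the categorical independent variable with two levels and `score` is the dependent variable. The field names and values are invented for illustration.

```python
from statistics import mean

# Hypothetical outcome scores (not real data). "group" is the categorical
# independent variable; "score" is the dependent variable.
participants = [
    {"group": "treatment", "score": 12},
    {"group": "treatment", "score": 15},
    {"group": "treatment", "score": 11},
    {"group": "treatment", "score": 14},
    {"group": "control", "score": 18},
    {"group": "control", "score": 20},
    {"group": "control", "score": 17},
    {"group": "control", "score": 21},
]


def group_mean(rows, level):
    """Mean of the dependent variable for one level of the grouping variable."""
    return mean(row["score"] for row in rows if row["group"] == level)


treatment_mean = group_mean(participants, "treatment")
control_mean = group_mean(participants, "control")

# The difference between group means is a simple estimate of the effect.
effect = treatment_mean - control_mean
print(f"treatment = {treatment_mean}, control = {control_mean}, effect = {effect}")
```

This only shows how the grouping variable organizes the comparison. A real analysis would also test whether the difference is statistically significant.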

In observational studies, independent variables are a bit different. While the researchers likely want to establish causation, that’s harder to do with this type of study, so they often won’t use the word “cause.” They also don’t set the values of the predictors. Some independent variables are the study’s focus, while others help keep the results valid.

Here’s how to recognize independent variables in observational studies:

- IVs explain the variability, predict, or correlate with changes in the dependent variable.

- Researchers in observational studies must include confounding variables (i.e., confounders) to keep the statistical results valid even if they are not the primary interest of the study. For example, these might include the participants’ socio-economic status or other background information that the researchers aren’t focused on but can explain some of the dependent variable’s variability.

- The write-up says the results are adjusted for, or controlled for, a variable.

Regardless of the study type, if you see an estimated effect size for a variable, that variable is an independent variable.

Identifying DVs

Dependent variables are the outcome. The IVs explain the variability or cause changes in the DV. Focus on the “depends” aspect. The value of the dependent variable depends on the IVs. If Y depends on X, then Y is the dependent variable. This aspect applies to both randomized experiments and observational studies.

In an observational study about the effects of smoking, the researchers observe the subjects’ smoking status (smoker/non-smoker) and their lung cancer rates. It’s an observational study because they cannot randomly assign subjects to either the smoking or non-smoking group. In this study, the researchers want to know whether lung cancer rates depend on smoking status. Therefore, the lung cancer rate is the dependent variable.

In a randomized COVID-19 vaccine experiment, the researchers randomly assign subjects to the treatment or control group. They want to determine whether COVID-19 infection rates depend on vaccination status. Hence, the infection rate is the DV.
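As a small sketch of what “infection rates depend on vaccination status” looks like in numbers, the code below compares the dependent variable (infection rate) across the two levels of the independent variable (vaccination status). The counts are hypothetical and do not come from any real trial.

```python
# Hypothetical trial counts (not from any real study).
trial = {
    "vaccine": {"participants": 1000, "infections": 10},
    "placebo": {"participants": 1000, "infections": 50},
}

# Dependent variable: infection rate within each level of the IV.
rates = {
    group: counts["infections"] / counts["participants"]
    for group, counts in trial.items()
}

# Two common ways to summarize how the DV differs between groups.
risk_difference = rates["vaccine"] - rates["placebo"]
relative_risk = rates["vaccine"] / rates["placebo"]

print(f"infection rates: {rates}")
print(f"risk difference = {risk_difference:.3f}, relative risk = {relative_risk:.2f}")
```

The same calculation applies to the smoking example, with the caveat that without random assignment a difference in rates may reflect confounders rather than a causal effect.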

Note that a variable can be an independent variable in one study but a dependent variable in another. It depends on the context.

For example, one study might assess how the amount of exercise (IV) affects health (DV). However, another study might examine the factors (IVs) that influence how much someone exercises (DV). The amount of exercise is an independent variable in one study but a dependent variable in the other!

How Analyses Use IVs and DVs

Regression analysis and ANOVA mathematically describe the relationships between each independent variable and the dependent variable. Typically, you want to determine how changes in one or more predictors are associated with changes in the dependent variable. These analyses estimate an effect size for each independent variable.

Suppose researchers study the relationship between wattage, several types of filaments, and the output from a light bulb. In this study, light output is the dependent variable because it depends on the other two variables. Wattage (continuous) and filament type (categorical) are the independent variables.

After performing the regression analysis, the researchers will understand the nature of the relationship between these variables. How much does the light output increase on average for each additional watt? Does the mean light output differ by filament types? They will also learn whether these effects are statistically significant.
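The light bulb example can be sketched as a small multiple regression with one continuous and one categorical independent variable. The bulb data below are synthetic and noise-free, and the helper functions `solve` and `ols` are illustrative names written for this sketch, not part of any library. The sketch estimates coefficients only and does not compute significance tests.

```python
def solve(a, b):
    """Solve the square system a x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        # Partial pivoting: move the largest entry in this column to the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def ols(rows, y):
    """Least-squares coefficients from the normal equations (X'X) b = X'y."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve(xtx, xty)


# Hypothetical bulbs: (wattage, filament type, light output in lumens).
bulbs = [
    (40, "A", 450), (60, "A", 690), (75, "A", 870), (100, "A", 1170),
    (40, "B", 500), (60, "B", 740), (75, "B", 920), (100, "B", 1220),
]

# Design matrix columns: intercept, wattage (continuous IV), and a 0/1 dummy
# that is 1 for filament type B (categorical IV).
X = [[1.0, float(w), 1.0 if f == "B" else 0.0] for w, f, _ in bulbs]
y = [float(out) for _, _, out in bulbs]

intercept, per_watt, filament_b = ols(X, y)
print(f"output = {intercept:.1f} + {per_watt:.1f} * watts + {filament_b:.1f} * is_type_B")
```

The wattage coefficient answers the first question (the average increase in output per additional watt), and the dummy coefficient answers the second (the difference in mean output between filament types at the same wattage).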

Related post: When to Use Regression Analysis

Graphing Independent and Dependent Variables