Experimental Research Design — 6 mistakes you should never make!

Since their school days, students have performed scientific experiments whose results illustrate and support the laws and theorems of science. These experiments rest on a strong foundation of experimental research design.

An experimental research design helps researchers execute their research objectives with more clarity and transparency.

In this article, we discuss not only the key aspects of experimental research designs but also the issues to avoid and the problems to resolve while designing your research study.


What Is Experimental Research Design?

Experimental research design is a framework of protocols and procedures for conducting experimental research with a scientific approach using two sets of variables. The first set (the independent variables) is controlled or manipulated by the researcher and serves as the reference against which changes in the second set (the dependent variables) are measured. Experimental research is a form of quantitative research.

Experimental research helps a researcher gather the necessary data for making better research decisions and determining the facts of a research study.

When Can a Researcher Conduct Experimental Research?

A researcher can conduct experimental research in the following situations:

- When time is an important factor in establishing a relationship between cause and effect.

- When the relationship between cause and effect is invariable, that is, the cause consistently produces the same effect.

- Finally, when the researcher wants to understand the significance of a cause-and-effect relationship.

Importance of Experimental Research Design

To publish significant results, you need a quality research design as the foundation of your study. An effective research design also establishes quality decision-making procedures, structures the research so that data analysis is easier, and addresses the main research question. Therefore, it is essential to devote undivided attention and time to creating an experimental research design before beginning the practical experiment.

By creating a research design, researchers also give themselves time to organize the research, set relevant boundaries for the study, and increase the reliability of the results. These efforts also help them avoid inconclusive results. If any part of the research design is flawed, the flaw will be reflected in the quality of the results.

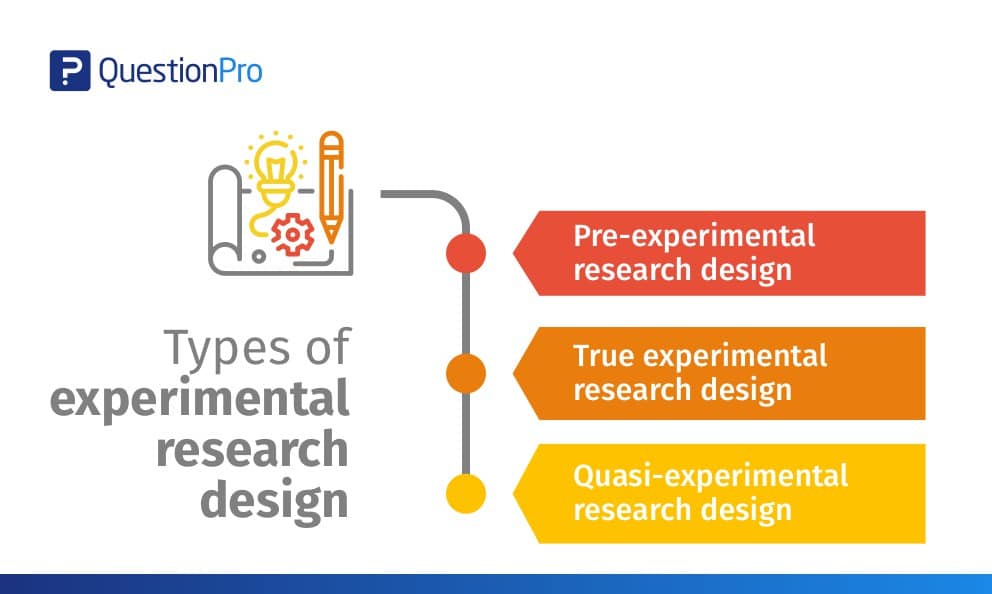

Types of Experimental Research Designs

Based on how research subjects are assigned to groups and conditions, experimental research designs are of three primary types:

1. Pre-experimental Research Design

A study uses a pre-experimental research design when one or more groups are observed after a treatment that is presumed to cause change has been applied. The pre-experimental design helps researchers decide whether further investigation of the groups under observation is necessary.

There are three types of pre-experimental research design:

- One-shot Case Study Research Design

- One-group Pretest-posttest Research Design

- Static-group Comparison

2. True Experimental Research Design

A true experimental research design relies on statistical analysis to support or refute a researcher’s hypothesis. It is one of the most accurate forms of research because it provides specific scientific evidence. Furthermore, of all the types of experimental design, only a true experimental design can establish a cause-effect relationship. To qualify as a true experiment, a study must satisfy these three conditions:

- A control group that is not subjected to the change and an experimental group that experiences the manipulated variable

- A variable that can be manipulated by the researcher

- Random assignment of subjects to the control and experimental groups

This type of experimental research is commonly observed in the physical sciences.

3. Quasi-experimental Research Design

The word “quasi” means “partly” or “resembling.” A quasi-experimental design resembles a true experimental design; the difference between the two lies in how participants are assigned to groups. In this research design, an independent variable is manipulated, but participants are not randomly assigned to groups. This type of research design is used in field settings where random assignment is impractical or impossible.

The classification of the research subjects, conditions, or groups determines the type of research design to be used.

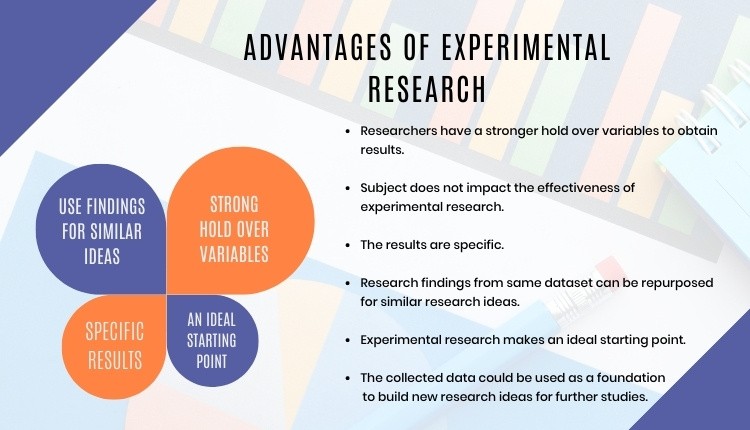

Advantages of Experimental Research

Experimental research allows you to test your idea in a controlled environment before taking the research to clinical trials. It is also a strong method for testing a theory because of the following advantages:

- Researchers have firm control over variables to obtain results.

- Experimental research is not limited to a particular subject area; researchers in any field can apply it.

- The results are specific.

- After the results are analyzed, findings from the same dataset can be repurposed for similar research ideas.

- Researchers can identify the cause-and-effect relationship stated in the hypothesis and analyze it further to gain deeper insights.

- Experimental research makes an ideal starting point. The collected data could be used as a foundation to build new research ideas for further studies.

6 Mistakes to Avoid While Designing Your Research

There is no order to this list, and any one of these issues can seriously compromise the quality of your research. You could refer to the list as a checklist of what to avoid while designing your research.

1. Invalid Theoretical Framework

Researchers often fail to check whether their hypothesis is logical and testable. If your research design lacks basic assumptions or postulates, it is fundamentally flawed, and you need to rework your research framework.

2. Inadequate Literature Study

Without a comprehensive research literature review, it is difficult to identify and fill knowledge and information gaps. Furthermore, you need to state clearly how your research will contribute to the field, either by adding value to the pertinent literature or by challenging previous findings and assumptions.

3. Insufficient or Incorrect Statistical Analysis

Statistical results are among the most trusted forms of scientific evidence, and the ultimate goal of a research experiment is to obtain valid and robust evidence. Therefore, insufficient or incorrect statistical analysis can undermine the quality of any quantitative research.
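To make the point about statistical analysis concrete, here is a minimal Python sketch of one common way to test whether a treated group differs from a control group: an exact two-sided permutation test on the difference in means. The function name `permutation_test` and the measurements are hypothetical, and exhaustive enumeration is only practical for small groups; larger studies would sample random relabelings or use another test suited to the design.

```python
from itertools import combinations
from statistics import mean


def permutation_test(group_a, group_b):
    """Exact two-sided permutation test for a difference in group means.

    Returns the observed difference (mean of A minus mean of B) and the share
    of all possible relabelings whose absolute difference is at least as
    large as the observed one.
    """
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    observed = mean(group_a) - mean(group_b)
    extreme = 0
    total = 0
    # Enumerate every way of choosing which pooled values form "group A".
    for idx in combinations(range(len(pooled)), n_a):
        chosen = set(idx)
        a = [pooled[i] for i in idx]
        b = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        diff = mean(a) - mean(b)
        total += 1
        # Small tolerance guards against floating-point ties.
        if abs(diff) >= abs(observed) - 1e-12:
            extreme += 1
    return observed, extreme / total


treated = [12.1, 13.4, 12.8, 14.0]  # hypothetical measurements
control = [10.2, 11.1, 10.8, 11.5]
diff, p = permutation_test(treated, control)
print(f"difference = {diff:.2f}, p = {p:.3f}")
```

The p-value is the share of all 70 possible relabelings of the eight values that produce a difference at least as extreme as the observed one. Here only the observed split and its mirror image qualify, so p = 2/70.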

4. Undefined Research Problem

This is one of the most basic aspects of research design. The research problem statement must be clear; to achieve this, set up a framework for developing research questions that address the core problem.

5. Research Limitations

Every study has limitations. You should anticipate them and account for them in both your basic research design and your conclusion. Include a statement in your manuscript about any perceived limitations and how you considered them while designing your experiment and drawing your conclusions.

6. Ethical Implications

Ethics is one of the most important yet least discussed aspects of research design. Your research design must include ways to minimize any risk to your participants while still addressing the research problem or question at hand. If your study does not meet ethical norms, your research objectives and validity could be questioned.

Experimental Research Design Example

In an experimental design, a researcher gathers plant samples and randomly assigns half of them to photosynthesize in sunlight and the other half to be kept in a dark box without sunlight, while controlling all the other variables (nutrients, water, soil, etc.).

By comparing the outcomes of biochemical tests on the two groups, the researcher can attribute differences in the plants to sunlight rather than to the other variables.
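Random assignment is the step that lets the researcher attribute the difference to sunlight. A minimal Python sketch of it, assuming 20 hypothetical plant samples identified by label and a hypothetical helper `randomly_assign`, could look like this:

```python
import random


def randomly_assign(samples, seed=None):
    """Shuffle a copy of the samples and split it into two near-equal groups."""
    rng = random.Random(seed)  # seeded generator so the assignment can be reproduced
    shuffled = list(samples)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


plants = [f"plant_{i:02d}" for i in range(1, 21)]  # 20 hypothetical plant samples
sunlight, dark_box = randomly_assign(plants, seed=42)
print("Sunlight:", sunlight)
print("Dark box:", dark_box)
```

Fixing the seed makes the assignment reproducible, so it can be documented and audited; omitting it gives a fresh random split on every run.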

Experimental research is often the final stage of the research process and is considered to provide conclusive, specific results. However, it is not suitable for every study. It requires substantial resources, time, and money, and it is difficult to conduct unless a foundation of prior research has been built. Yet it is widely used in research institutes and commercial industries because it yields the most conclusive results within the scientific approach.

Have you worked on research designs? How was your experience creating an experimental design? What difficulties did you face? Do write to us or comment below and share your insights on experimental research designs!

Frequently Asked Questions

Randomization is important in experimental research because it helps prevent bias in the results: by spreading unknown factors evenly across groups on average, it allows the cause-effect relationship to be measured in the group of interest.

Experimental research design lays the foundation of a study and structures it to establish a quality decision-making process.

There are 3 types of experimental research design: pre-experimental, true experimental, and quasi-experimental.

The differences between an experimental and a quasi-experimental design are: 1. In quasi-experimental research, subjects are not randomly assigned to groups, whereas in a true experimental design they are. 2. True experimental research always has a control group, whereas quasi-experimental research may not.

Experimental research establishes a cause-effect relationship by testing a theory or hypothesis using experimental and control groups. In contrast, descriptive research describes a study or topic by defining the variables involved and answering questions about them.


Experimental Research Designs: Types, Examples & Methods

Experimental research is the most familiar type of research design for individuals in the physical sciences and a host of other fields. This is mainly because experimental research is a classical scientific experiment, similar to those performed in high school science classes.

Imagine taking 2 samples of the same plant and exposing one of them to sunlight, while the other is kept away from sunlight. Let the plant exposed to sunlight be called sample A, while the latter is called sample B.

Suppose that, at the end of the study, sample A has grown while sample B has died, even though both were watered regularly and given the same treatment. We can then conclude that sunlight aided the growth of this plant; repeating the test with more samples would strengthen the conclusion that sunlight aids growth in all similar plants.

What is Experimental Research?

Experimental research is a scientific approach to research in which one or more independent variables are manipulated to measure their effect on one or more dependent variables. The effect of the independent variables on the dependent variables is usually observed and recorded over a period of time to help researchers draw a reasonable conclusion about the relationship between these 2 variable types.

The experimental research method is widely used in physical and social sciences, psychology, and education. It is based on the comparison between two or more groups with a straightforward logic, which may, however, be difficult to execute.

Mostly associated with laboratory test procedures, experimental research designs involve collecting quantitative data and performing statistical analysis on it, which makes experimental research an example of a quantitative research method.

What are The Types of Experimental Research Design?

The types of experimental research design are determined by the way the researcher assigns subjects to different conditions and groups. There are 3 types, namely: pre-experimental, quasi-experimental, and true experimental research.

Pre-experimental Research Design

In pre-experimental research design, one group or several dependent groups are observed for the effect of an independent variable that is presumed to cause change. It is the simplest form of experimental research design and has no randomly assigned control group.

Although very practical, pre-experimental research falls short of several of the true-experimental criteria. The pre-experimental research design is further divided into three types:

- One-shot Case Study Research Design

In this type of experimental study, only one dependent group or variable is considered. The study is carried out after some treatment which was presumed to cause change, making it a posttest study.

- One-group Pretest-posttest Research Design:

This research design combines a pretest and a posttest: a single group is tested before the treatment is administered and again after it, with the pretest given at the beginning of the treatment and the posttest at the end.

- Static-group Comparison:

In a static-group comparison study, 2 or more groups are placed under observation, where only one of the groups is subjected to some treatment while the other groups are held static. All the groups are post-tested, and the observed differences between the groups are assumed to be a result of the treatment.
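For the one-group pretest-posttest design, the basic quantity of interest is each subject's change between the two tests. The sketch below, in Python with hypothetical scores and a hypothetical helper `pretest_posttest_change`, computes it. Because this design has no control group, the mean change cannot on its own be attributed to the treatment; practice effects or maturation could also explain it.

```python
from statistics import mean


def pretest_posttest_change(pretest, posttest):
    """Per-subject change and mean change for a one-group pretest-posttest design."""
    if len(pretest) != len(posttest):
        raise ValueError("each subject needs both a pretest and a posttest score")
    changes = [post - pre for pre, post in zip(pretest, posttest)]
    return changes, mean(changes)


pre = [52, 61, 47, 70, 58]   # hypothetical scores before the treatment
post = [60, 66, 55, 72, 63]  # the same subjects after the treatment
changes, avg = pretest_posttest_change(pre, post)
print(changes, avg)  # [8, 5, 8, 2, 5] 5.6
```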

Quasi-experimental Research Design

The word “quasi” means partial, half, or pseudo. Quasi-experimental research therefore resembles true experimental research but is not the same. In quasi-experiments, participants are not randomly assigned, so these designs are used in settings where randomization is difficult or impossible.

This is very common in educational research, where administrators are unwilling to allow the random selection of students for experimental samples.

Some examples of quasi-experimental research design include the time series design, the nonequivalent control group design, and the counterbalanced design.

True Experimental Research Design

The true experimental research design relies on statistical analysis to support or refute a hypothesis. It is the most accurate type of experimental design and may be carried out with or without a pretest on at least 2 randomly assigned groups of subjects.

The true experimental research design must contain a control group and a variable that can be manipulated by the researcher, and subjects must be randomly assigned. True experimental designs are classified as follows:

- The posttest-only Control Group Design: In this design, subjects are randomly selected and assigned to the 2 groups (control and experimental), and only the experimental group is treated. After close observation, both groups are post-tested, and a conclusion is drawn from the difference between these groups.

- The pretest-posttest Control Group Design: For this control group design, subjects are randomly assigned to the 2 groups and both are pretested, but only the experimental group is treated. After close observation, both groups are post-tested to measure the degree of change in each group.

- Solomon four-group Design: This is the combination of the posttest-only and the pretest-posttest control group designs. In this case, the randomly selected subjects are placed into 4 groups.

The first two of these groups are tested using the posttest-only method, while the other two are tested using the pretest-posttest method.
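The group structure of the Solomon four-group design can be written down explicitly. This Python sketch, with hypothetical subject IDs and a hypothetical helper `solomon_assign`, randomly deals subjects into the four groups in the order described above: the first two groups receive only a posttest, the last two receive a pretest and a posttest, and within each pair one group is treated and one serves as the control.

```python
import random

# (receives pretest, receives treatment); every group receives the posttest.
SOLOMON_GROUPS = {
    "group_1": (False, True),   # treatment -> posttest
    "group_2": (False, False),  # posttest only (control)
    "group_3": (True, True),    # pretest -> treatment -> posttest
    "group_4": (True, False),   # pretest -> posttest (control)
}


def solomon_assign(subjects, seed=None):
    """Randomly split subjects into the four Solomon groups."""
    rng = random.Random(seed)
    shuffled = list(subjects)
    rng.shuffle(shuffled)
    names = list(SOLOMON_GROUPS)
    groups = {name: [] for name in names}
    # Deal the shuffled subjects round-robin so group sizes differ by at most one.
    for i, subject in enumerate(shuffled):
        groups[names[i % len(names)]].append(subject)
    return groups


subjects = [f"S{i:02d}" for i in range(1, 23)]  # 22 hypothetical subjects
for name, members in solomon_assign(subjects, seed=7).items():
    pretest, treated = SOLOMON_GROUPS[name]
    print(name, "pretest" if pretest else "no pretest",
          "treated" if treated else "control", members)
```

Comparing the two pretested groups with the two groups that skipped the pretest lets the researcher check whether taking the pretest itself changed the posttest results.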

Examples of Experimental Research

Examples of experimental research differ depending on the type of experimental research design being considered. The most basic example of experimental research is the laboratory experiment, which may differ in nature depending on the subject of research.

Administering Exams After The End of Semester

During the semester, students in a class are lectured on particular courses, and an exam is administered at the end of the semester. In this case, the students are the subjects, their exam scores are the dependent variable, and the lectures are the independent variable (the treatment applied to the subjects).

Only one group of carefully selected subjects is considered in this research, making it a pre-experimental research design example. Note also that the test is carried out only at the end of the semester, not at the beginning, which makes it a one-shot case study.

Employee Skill Evaluation

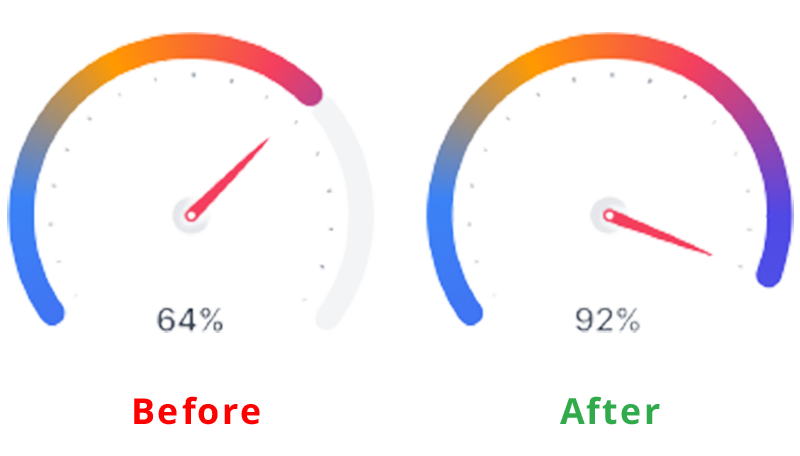

Before employing a job seeker, organizations conduct tests that are used to screen out less qualified candidates from the pool of qualified applicants. This way, organizations can determine an employee’s skill set at the point of employment.

In the course of employment, organizations also carry out employee training to improve employee productivity and generally grow the organization. Further evaluation is carried out at the end of each training to test the impact of the training on employee skills, and test for improvement.

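Here, the subject is the employee, while the treatment is the training conducted. Because the same employees are tested before and after training, this follows the pretest-posttest pattern; without a randomly assigned control group of untrained employees, however, it is strictly a one-group pretest-posttest design rather than a pretest-posttest control group design.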

Evaluation of Teaching Method

Let us consider an academic institution that wants to evaluate the teaching methods of 2 teachers to determine which is better. Imagine that the students assigned to each teacher were not randomly assigned but deliberately selected, perhaps at parents' request or because of the students' behavior or ability.

This is a nonequivalent group design example because the groups are not equivalent. We may evaluate the effectiveness of each teacher’s teaching method by comparing the groups after a posttest has been carried out.

However, the result may be influenced by factors such as a student’s natural intelligence. For example, a very smart student will grasp concepts more easily than his or her peers, irrespective of the teaching method.

What are the Characteristics of Experimental Research?

Experimental research contains dependent, independent, and extraneous variables. The dependent variables are the outcomes measured on the subjects of the research, who receive the treatment.

The independent variables are the experimental treatments that the researcher manipulates and applies to the subjects. Extraneous variables, on the other hand, are other factors affecting the experiment that may also contribute to the change.

The setting is where the experiment is carried out. Many experiments are carried out in the laboratory, where control can be exerted on the extraneous variables, thereby eliminating them.

Other experiments are carried out in a less controllable setting. The choice of setting used in research depends on the nature of the experiment being carried out.

- Multivariable

Experimental research may include multiple independent variables, e.g. time, skills, test scores, etc.

Why Use Experimental Research Design?

Experimental research design is used mainly in the physical sciences, social sciences, education, and psychology. It is used to make predictions and draw conclusions on a subject matter.

Some uses of experimental research design are highlighted below.

- Medicine: Experimental research is used to find the proper treatment for diseases. In many cases, rather than using patients directly as research subjects, researchers take a sample of bacteria from the patient’s body and treat it with the antibacterial agent under development.

The changes observed during this period are recorded and evaluated to determine its effectiveness. This process can be carried out using different experimental research methods.

- Education: Aside from science subjects like Chemistry and Physics, which involve teaching students how to perform experimental research, experimental research can also be used to improve the standard of an academic institution. This includes testing students’ knowledge on different topics, developing better teaching methods, and implementing other programs that aid student learning.

- Human Behavior: Social scientists mostly use experimental research to test human behavior. For example, consider 2 people randomly chosen to be the subjects of a social interaction study, where one person is placed in a room without human interaction for 1 year.

The other person is placed in a room with a few other people, enjoying human interaction. Their behavior can then be compared at the end of the experiment.

- UI/UX: During the product development phase, one of the major aims of the product team is to create a great user experience. Therefore, before launching the final product design, potential users are brought in to interact with the product.

For example, when the team finds it difficult to decide where to position a button or feature on the app interface, a random sample of product testers is asked to test the 2 versions, and how the button position influences user interaction is recorded.
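The button-placement test described above is often called an A/B test. One common way to analyze it, sketched here in Python, is a two-proportion z-test on the share of testers who interacted with the button in each layout. The click counts and the helper name `two_proportion_z_test` are hypothetical, and the normal approximation assumes reasonably large samples with each tester randomly assigned to one layout.

```python
from math import erf, sqrt


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided z-test comparing two proportions using a pooled standard error."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF via the error function: Phi(x) = (1 + erf(x / sqrt(2))) / 2
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value


# Hypothetical results: testers who clicked the button in layout A and layout B
z, p = two_proportion_z_test(successes_a=120, n_a=1000, successes_b=150, n_b=1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these hypothetical numbers, z is about 1.96 and p is just under 0.05, a borderline result; a real team would fix its significance threshold and sample size before running the test.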

What are the Disadvantages of Experimental Research?

- It is highly prone to human error because it depends on variable control, which may not be properly implemented. These errors could undermine the validity of the experiment and the research being conducted.

- Controlling extraneous variables may create unrealistic situations. Eliminating real-life variables can lead to conclusions that do not hold outside the experiment. It may also allow researchers to control the variables to suit their personal preferences.

- It is a time-consuming process. Much time is spent testing dependent variables and waiting for the effect of the manipulation of independent variables to manifest.

- It is expensive.

- It is very risky and may have ethical complications that cannot be ignored. This is common in medical research, where failed trials may lead to a patient’s death or a deteriorating health condition.

- Experimental research results are not descriptive.

- The research subjects can also introduce response bias.

- Human responses in experimental research can be difficult to measure.

What are the Data Collection Methods in Experimental Research?

Data collection methods in experimental research are the different ways in which data can be collected for experimental research. They are used in different cases, depending on the type of research being carried out.

1. Observational Study

This type of study is carried out over a long period. It measures and observes the variables of interest without changing existing conditions.

When researching the effect of social interaction on human behavior, the subjects placed in the 2 different environments are observed throughout the research. Whatever unusual behavior the subjects exhibit during this period, their conditions are not changed.

This may be a very risky thing to do in medical cases because it may lead to death or worse medical conditions.

2. Simulations

This procedure uses mathematical, physical, or computer models to replicate a real-life process or situation. It is frequently used when the actual situation is too expensive, dangerous, or impractical to replicate in real life.

This method is commonly used in engineering and operational research for learning purposes and sometimes as a tool to estimate possible outcomes of real research. Some common simulation software tools are Simulink, MATLAB, and Simul8.

Not all kinds of experimental research can be carried out using simulation as a data collection tool. It is impractical for much laboratory-based research that involves chemical processes.
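Simulation can estimate possible outcomes of real research before it is run. As a small illustration in Python rather than in the tools named above, the Monte Carlo sketch below simulates many two-group experiments under assumed conditions (normally distributed outcomes, a hypothetical true effect and standard deviation) and reports how often the difference would be detected, which helps decide how many subjects a real experiment needs. The function names and parameter values are hypothetical, and the 1.96 cutoff is a large-sample approximation rather than an exact t-test.

```python
import random
from math import sqrt


def _sample_variance(values):
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values) / (len(values) - 1)


def simulated_power(effect, sd, n_per_group, trials=2000, seed=0):
    """Estimate the share of simulated two-group experiments that detect `effect`."""
    rng = random.Random(seed)
    detections = 0
    for _ in range(trials):
        # Simulate one experiment: normally distributed outcomes in each group.
        control = [rng.gauss(0.0, sd) for _ in range(n_per_group)]
        treated = [rng.gauss(effect, sd) for _ in range(n_per_group)]
        se = sqrt((_sample_variance(control) + _sample_variance(treated)) / n_per_group)
        t = (sum(treated) / n_per_group - sum(control) / n_per_group) / se
        # Large-sample approximation: treat |t| > 1.96 as "significant at 5%".
        if abs(t) > 1.96:
            detections += 1
    return detections / trials


for n in (10, 30, 60):
    print(n, simulated_power(effect=0.5, sd=1.0, n_per_group=n))
```

Running the loop shows detection rates rising with group size, which is the kind of planning estimate a simulation can provide before any real subjects are recruited.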

3. Surveys

A survey is a tool used to gather relevant data about the characteristics of a population and is one of the most common data collection tools. A survey consists of a group of questions prepared by the researcher, to be answered by the research subject.

Surveys can be shared with the respondents both physically and electronically. When collecting data through surveys, the kind of data collected depends on the respondent, and researchers have limited control over it.

Formplus is one tool for collecting experimental data using surveys. It has features that aid the data collection process and can also be used in other aspects of experimental research.

Differences between Experimental and Non-Experimental Research

1. In experimental research, the researcher can control and manipulate the environment of the research, including the predictor variable, which can be changed. In non-experimental research, on the other hand, the researcher cannot control or manipulate the research setting at will.

This is because it takes place in a real-life setting, where extraneous variables cannot be eliminated. Therefore, it is more difficult to draw conclusions from non-experimental studies, even though they are much more flexible and allow for a greater range of study fields.

2. The relationship between cause and effect cannot be established in non-experimental research, while it can be established in experimental research. This is because many extraneous variables also influence the changes in the research subject, making it difficult to point to a particular variable as the cause of a particular change.

3. Independent variables are not introduced, withdrawn, or manipulated in non-experimental designs, but the same may not be said about experimental research.

Experimental Research vs. Alternatives and When to Use Them

1. Experimental Research vs Causal-Comparative Research

Experimental research enables you to control variables and identify how the independent variable affects the dependent variable. Causal-comparative research identifies cause-and-effect relationships between variables by comparing already existing groups that are affected differently by the independent variable.

For example, consider a study of how K-12 education affects the development of children and teenagers. An experimental study would split the children into groups, where some would receive formal K-12 education and others would not. This is unethical because every child has the right to education. Instead, a causal-comparative study would compare existing groups of children who are receiving formal education with those who, due to some circumstances, cannot.

Pros and Cons of Experimental vs Causal-Comparative Research

- Causal-Comparative: Strengths: More realistic than experiments, can be conducted in real-world settings. Weaknesses: Establishing causality can be weaker due to the lack of manipulation.

2. Experimental Research vs Correlational Research

When experimenting, you are trying to establish a cause-and-effect relationship between different variables. For example, to establish the effect of heat on water, you change the temperature (independent variable) and observe how it affects the water (dependent variable).

For correlational research, you are not necessarily interested in why the variables change or in the cause-and-effect relationship between them; you are focusing on the relationship itself. Using the same water and temperature example, you are only interested in the fact that they change together, not in which of the variables, or which other variables, causes the change.
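Correlational research typically summarizes the relationship between two measured variables with a correlation coefficient. The Python sketch below computes Pearson's r; the helper name `pearson_r` and the temperature and evaporation readings are hypothetical. A value near 1 or -1 indicates a strong linear relationship, but it says nothing about which variable causes the change.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient; undefined if either sequence is constant."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of the same length (at least 2 values)")
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Hypothetical observations: water temperature (°C) and evaporation (mL)
temperature = [10, 15, 20, 25, 30]
evaporation = [2.1, 2.9, 4.2, 4.8, 6.1]
r = pearson_r(temperature, evaporation)
print(f"r = {r:.3f}")
```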

Pros and Cons of Experimental vs Correlational Research

3. Experimental Research vs Descriptive Research

With experimental research, you alter the independent variable to see how it affects the dependent variable, but with descriptive research you are simply studying the characteristics of the variable you are studying.

So, in an experiment to see how blown glass reacts to temperature, experimental research would keep altering the temperature to varying high and low levels to see how it affects the dependent variable (the glass). Descriptive research, by contrast, would investigate and describe the properties of the glass.

Pros and Cons of Experimental vs Descriptive Research

4. Experimental Research vs Action Research

Experimental research tests for causal relationships by focusing on one independent variable vs the dependent variable and keeps other variables constant. So, you are testing hypotheses and using the information from the research to contribute to knowledge.

However, with action research, you are using a real-world setting which means you are not controlling variables. You are also performing the research to solve actual problems and improve already established practices.

For example, suppose you are testing how long commutes affect workers’ productivity. With experimental research, you would vary the length of the commute to see how the time affects work. With action research, you would also account for other factors such as weather, commute route, and nutrition. Also, experimental research helps you understand the relationship between commute time and productivity, while action research helps you look for ways to improve productivity.

Pros and Cons of Experimental vs Action Research

Conclusion

Experimental research designs are often considered the standard in research design. This is partly due to the common misconception that research is equivalent to scientific experiments, which are only one component of experimental research design.

In this research design, subjects are randomly assigned to different treatments (i.e., levels of the independent variables manipulated by the researcher), and the results are observed to draw conclusions. One unique feature of experimental research is its ability to control the effect of extraneous variables.

Experimental research is suitable for research whose goal is to examine cause-effect relationships, e.g. explanatory research. It can be conducted in the laboratory or field settings, depending on the aim of the research that is being carried out.


- busayo.longe


Experimental Research: What it is + Types of designs

Any research conducted under scientifically acceptable conditions uses experimental methods. The success of experimental studies hinges on researchers confirming that a change in the dependent variable results solely from the manipulation of the independent variable. The research should establish a clear cause-and-effect relationship.

What is Experimental Research?

Experimental research is a study conducted with a scientific approach using two sets of variables. The first set acts as a constant, which you use to measure the differences of the second set. Experimental research is one example of a quantitative research method.

If you don’t have enough data to support your decisions, you must first determine the facts. This research gathers the data necessary to help you make better decisions.

You can conduct experimental research in the following situations:

- Time is a vital factor in establishing a relationship between cause and effect.

- There is invariable behavior between cause and effect.

- You wish to understand the importance of cause and effect.

Experimental Research Design Types

The classic experimental design definition is: “The methods used to collect data in experimental studies.”

There are three primary types of experimental design:

- Pre-experimental research design

- True experimental research design

- Quasi-experimental research design

The way you classify research subjects based on conditions or groups determines the type of research design you should use.

1. Pre-Experimental Design

One or more groups are kept under observation after the factors presumed to cause an effect have been introduced. You’ll conduct this research to understand whether further investigation of these particular groups is necessary.

You can break down pre-experimental research further into three types:

- One-shot Case Study Research Design

- One-group Pretest-posttest Research Design

- Static-group Comparison
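In a one-group pretest-posttest design, the same group is measured before and after the treatment, and the change between the two measurements is examined. The short Python sketch below computes each participant’s change score and the group’s mean change; the function name and the scores are hypothetical, for illustration only. Because there is no control group, a change found this way cannot be attributed to the treatment alone, which is why the design counts as pre-experimental.

```python
from statistics import mean


def mean_change(pretest, posttest):
    """Return the mean (posttest - pretest) change for a single group.

    Assumes pretest[i] and posttest[i] are scores for the same participant.
    """
    if len(pretest) != len(posttest):
        raise ValueError("pretest and posttest must have the same length")
    changes = [post - pre for pre, post in zip(pretest, posttest)]
    return mean(changes)


# Hypothetical scores for five participants (illustrative, not real data).
pre = [52, 60, 47, 55, 58]
post = [58, 63, 50, 61, 60]
print(mean_change(pre, post))  # 4
```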

2. True Experimental Design

True experimental design relies on statistical analysis to support or reject a hypothesis, which makes it the most rigorous form of experimental research. Of the types of experimental design, only true experimental design can establish a cause-and-effect relationship. In a true experiment, three factors need to be satisfied:

- There is a Control Group, which won’t be subject to changes, and an Experimental Group, which will experience the changed variables.

- A variable that can be manipulated by the researcher

- Random distribution of participants between the groups

This experimental research method commonly occurs in the physical sciences.
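The three conditions of a true experiment can be illustrated with a small simulation. The Python sketch below is hypothetical: it assumes each participant’s outcome is a baseline score drawn from a normal distribution (mean 50, standard deviation 10) and that the treatment simply adds a fixed `true_effect`. Participants are randomly distributed between an experimental and a control group, and the difference between the group means estimates the treatment effect. The model, the function name, and all parameter values are illustrative assumptions.

```python
import random
from statistics import fmean


def run_true_experiment(n_participants, true_effect, seed=0):
    """Simulate a two-group true experiment and return the estimated effect.

    Hypothetical model: outcome = baseline (+ true_effect if treated).
    """
    rng = random.Random(seed)
    baselines = [rng.gauss(50, 10) for _ in range(n_participants)]

    # Random distribution: shuffle participant IDs, then split them in half.
    ids = list(range(n_participants))
    rng.shuffle(ids)
    half = n_participants // 2
    experimental_ids, control_ids = ids[:half], ids[half:]

    # The researcher manipulates the variable for the experimental group only;
    # the control group is left unchanged.
    experimental = [baselines[i] + true_effect for i in experimental_ids]
    control = [baselines[i] for i in control_ids]

    return fmean(experimental) - fmean(control)


estimate = run_true_experiment(n_participants=20_000, true_effect=5.0)
print(f"estimated effect: {estimate:.2f}")
```

Because assignment is random, baseline differences between the groups tend to average out, so with a large sample the estimate lands close to the assumed effect of 5.0. With small samples, the estimate varies more from one run to the next.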

3. Quasi-Experimental Design

The word “Quasi” indicates similarity. A quasi-experimental design is similar to a true experimental design, but it is not the same. The difference between the two lies in how participants are assigned to groups: an independent variable is manipulated, but the participants are not randomly assigned. Quasi-experimental research is typically used in field settings where random assignment is impractical or not possible.

Importance of Experimental Design

Experimental research is a powerful tool for understanding cause-and-effect relationships. It allows us to manipulate variables and observe the effects, which is crucial for understanding how different factors influence the outcome of a study.

But the importance of experimental research goes beyond that. It’s a critical method for many scientific and academic studies. It allows us to test theories, develop new products, and make groundbreaking discoveries.

For example, this research is essential for developing new drugs and medical treatments. Researchers can understand how a new drug works by manipulating dosage and administration variables and identifying potential side effects.

Similarly, experimental research is used in the field of psychology to test theories and understand human behavior. By manipulating variables such as stimuli, researchers can gain insights into how the brain works and identify new treatment options for mental health disorders.

It is also widely used in the field of education. It allows educators to test new teaching methods and identify what works best. By manipulating variables such as class size, teaching style, and curriculum, researchers can understand how students learn and identify new ways to improve educational outcomes.

In addition, experimental research is a powerful tool for businesses and organizations. By manipulating variables such as marketing strategies, product design, and customer service, companies can understand what works best and identify new opportunities for growth.

Advantages of Experimental Research

Experimentation is part of everyday human life. Babies do their own rudimentary experiments (such as putting objects in their mouths) to learn about the world around them, while older children and teens do experiments at school to learn more about science.

Scientists of earlier centuries used this research to test their hypotheses. For example, Galileo Galilei and Antoine Lavoisier conducted various experiments to discover key concepts in physics and chemistry. The same is true of modern experts, who use this scientific method to see if new drugs are effective, discover treatments for diseases, and create new electronic devices (among others).

It’s vital to test new ideas or theories. Why put time, effort, and funding into something that may not work?

This research allows you to test your idea in a controlled environment before bringing it to market. It is also an effective way to test your theory, thanks to the following advantages:

- Researchers have greater control over variables, which makes it easier to isolate the effect of each one.

- The subject or industry does not impact the effectiveness of experimental research. Any industry can implement it for research purposes.

- The results are specific.

- After analyzing the results, you can apply your findings to similar ideas or situations.

- You can identify the cause and effect of a hypothesis. Researchers can further analyze this relationship to determine more in-depth ideas.

- Experimental research makes an ideal starting point. The data you collect is a foundation for building more ideas and conducting more action research.

Whether you want to know how the public will react to a new product or if a certain food increases the chance of disease, experimental research is the best place to start.


A Complete Guide to Experimental Research

Published by Carmen Troy at August 14th, 2021 , Revised On August 25, 2023

A Quick Guide to Experimental Research

Experimental research refers to experiments conducted in a laboratory, or observations made under controlled conditions. Researchers try to find out the cause-and-effect relationship between two or more variables.

The subjects/participants in the experiment are selected and observed. They receive treatments, such as changes in room temperature, diet, or atmosphere, or a new drug, and the resulting changes are observed. Experiments can vary from personal and informal natural comparisons. Experimental research involves three types of variables:

- Independent variable

- Dependent variable

- Controlled variable

Before conducting experimental research, you need to have a clear understanding of the experimental design. A true experimental design includes identifying a problem, formulating a hypothesis, determining the number of variables, selecting and assigning the participants, choosing the type of research design, meeting ethical requirements, etc.

There are many types of research methods that can be classified based on:

- The nature of the problem to be studied

- Number of participants (individual or groups)

- Number of groups involved (Single group or multiple groups)

- Types of data collection methods (Qualitative/Quantitative/Mixed methods)

- Number of variables (a single independent variable, or two or more independent variables in a factorial design)

- The experimental design

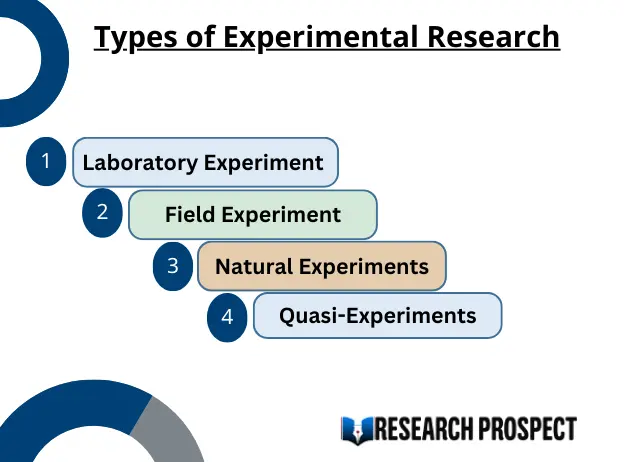

Types of Experimental Research

Laboratory Experiment

This type of experiment, also simply called experimental research, is conducted in a laboratory, where the researcher can manipulate and control the variables of the experiment.

Example: Milgram’s experiment on obedience.

Field Experiment

Field experiments are conducted in the participants’ everyday environment, with a few artificial changes introduced by the researcher. Researchers have less control over extraneous variables than in a laboratory. Participants know that they are taking part in the experiment.

Natural Experiments

The experiment is conducted in the natural environment of the participants. The participants are generally not informed about the experiment being conducted on them.

Examples: Estimating the health condition of the population. Did the increase in tobacco prices decrease the sale of tobacco? Did the usage of helmets decrease the number of head injuries of the bikers?

Quasi-Experiments

A quasi-experiment is an experiment that takes advantage of natural occurrences. Researchers cannot randomly assign participants to groups.

Example: Comparing the academic performance of two schools.


How to Conduct Experimental Research?

Step 1. Identify and Define the Problem

You need to identify a problem as per your field of study and describe your research question.

Example: You want to know about the effects of social media on the behavior of youngsters. It would help if you found out how much time students spend on the internet daily.

Example: You want to find out the adverse effects of junk food on human health. It would help if you found out how frequent consumption of junk food can affect an individual’s health.

Step 2. Determine the Number of Levels of Variables

You need to determine the number of variables and their levels. The independent variable is the predictor and is manipulated by the researcher, while the dependent variable is the outcome that results from changes in the independent variable.

In the first example, we predicted that increased social media usage is associated with more negative behaviour among youngsters.

In the second example, we predicted a positive correlation between a balanced diet and good health, and a positive correlation between junk food consumption and multiple health issues.

Step 3. Formulate the Hypothesis

One of the essential aspects of experimental research is formulating a hypothesis. A researcher studies the cause and effect between the independent and dependent variables and controls for confounding variables. The null hypothesis, denoted H0, states that there is no significant relationship between the independent and dependent variables; the researcher aims to reject it. The alternative hypothesis, denoted H1 or HA, is the theory that the researcher seeks to support.
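As a worked illustration of testing H0 against H1, the Python sketch below runs a two-sided permutation test on the difference between two group means. The article does not prescribe a particular statistical test; a permutation test is simply one common choice that makes few distributional assumptions, and the function name and scores here are hypothetical. Under H0 the group labels are interchangeable, so the test reshuffles the labels many times and counts how often a difference at least as large as the observed one arises by chance.

```python
import random


def permutation_test(group_a, group_b, n_permutations=10_000, seed=0):
    """Two-sided permutation test for a difference in means.

    H0: the group labels make no difference to the scores.
    Returns (observed difference in means, estimated p-value).
    """
    rng = random.Random(seed)
    n_a = len(group_a)
    observed = sum(group_a) / n_a - sum(group_b) / len(group_b)

    pooled = list(group_a) + list(group_b)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # reassign labels at random, as H0 allows
        diff = sum(pooled[:n_a]) / n_a - sum(pooled[n_a:]) / (len(pooled) - n_a)
        if abs(diff) >= abs(observed):
            extreme += 1

    # The +1 terms count the observed labelling itself, so p is never exactly 0.
    p_value = (extreme + 1) / (n_permutations + 1)
    return observed, p_value


# Hypothetical scores (illustrative only).
treatment = [14, 15, 12, 13, 10, 11]
control = [3, 6, 2, 4, 1, 5]
diff, p = permutation_test(treatment, control)
print(f"difference = {diff:.1f}, p = {p:.4f}")
```

A small p-value (conventionally below 0.05) is taken as evidence against H0 and in favour of H1; it does not prove H1.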


Step 4. Selection and Assignment of the Subjects

Selecting and assigning subjects is an essential feature that differentiates experimental design from other research designs. You need to select the number of participants based on the requirements of your experiment. Then the participants are assigned to the treatment group or to a control group. The control group receives no treatment, so its outcomes can be compared with those of the experimental group.

Randomisation: Participants are selected at random and randomly assigned to the experimental or control group. Random selection is known as probability sampling; if the selection is not random, it’s considered non-probability sampling.

Stratified sampling: A type of random selection in which the population is divided into strata (subgroups) and participants are randomly selected from each stratum.
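The stratified sampling procedure can be sketched in a few lines of Python. This is a minimal illustration that assumes an equal number of participants is drawn from every stratum (proportional allocation is another common option); the function name, the `year` attribute, and the population are hypothetical.

```python
import random
from collections import defaultdict


def stratified_sample(population, stratum_of, per_stratum, seed=None):
    """Randomly select `per_stratum` members from each stratum.

    `stratum_of` is a function that returns the stratum label of a member.
    """
    rng = random.Random(seed)

    # Divide the population into strata.
    strata = defaultdict(list)
    for member in population:
        strata[stratum_of(member)].append(member)

    # Randomly select members from each stratum (without replacement).
    sample = []
    for label, members in strata.items():
        if len(members) < per_stratum:
            raise ValueError(f"stratum {label!r} has only {len(members)} members")
        sample.extend(rng.sample(members, per_stratum))
    return sample


# Hypothetical population: 30 students spread over three school years.
students = [{"id": i, "year": 1 + i % 3} for i in range(30)]
chosen = stratified_sample(students, lambda s: s["year"], per_stratum=4, seed=42)
print(sorted((s["year"], s["id"]) for s in chosen))
```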

Matching: Even when participants are selected randomly, the comparison groups they are assigned to can still differ. Another procedure for selecting participants is ‘matching.’ Participants in the control group are selected to match those in the experimental group on characteristics that are likely to affect the dependent variable.

What is Replicability?

When a researcher repeats an experiment using the same methodology and comparable subject groups, the extent to which the results can be reproduced is called ‘replicability.’ If the findings are replicable, the results will be similar each time. Researchers usually replicate their own work to strengthen external validity.

Step 5. Select a Research Design

You need to select a research design according to the requirements of your experiment. There are many types of experimental designs to choose from.

Step 6. Meet Ethical and Legal Requirements

- Participants of the research should not be harmed.

- The dignity of the participants and the confidentiality of the research should be maintained.

- Informed consent should be obtained from the participants before the experiment.

- The privacy of the participants should be ensured.

- Research data should remain confidential.

- The anonymity of the participants should be ensured.

- The rules and objectives of the experiments should be followed strictly.

- Falsified or misleading information and data should be avoided.

Tips for Meeting the Ethical Considerations

To meet the ethical considerations, you need to ensure that:

- Participants have the right to withdraw from the experiment.

- Participants are given the necessary information about the experiment.

- Offensive or unacceptable language is avoided when framing the questions of interviews, questionnaires, or focus groups.

- The privacy and anonymity of the participants are protected.

- Sources and authors are acknowledged in your dissertation using a referencing style such as APA, MLA, or Harvard.

Step 7. Collect and Analyse Data

Collect the data using data collection methods suited to your experiment’s requirements, such as observations, case studies, surveys, interviews, or questionnaires. Then analyse the information you obtain.

Step 8. Present and Conclude the Findings of the Study

Write the report of your research. Present, explain, and draw conclusions from the outcomes of your study.

Frequently Asked Questions

What is the first step in conducting experimental research?

The first step in conducting experimental research is to define your research question or hypothesis. Clearly outline the purpose and expectations of your experiment to guide the entire research process.


Experimental Design: Types, Examples & Methods

Saul McLeod, PhD

Editor-in-Chief for Simply Psychology

BSc (Hons) Psychology, MRes, PhD, University of Manchester

Saul McLeod, PhD., is a qualified psychology teacher with over 18 years of experience in further and higher education. He has been published in peer-reviewed journals, including the Journal of Clinical Psychology.


Olivia Guy-Evans, MSc

Associate Editor for Simply Psychology

BSc (Hons) Psychology, MSc Psychology of Education

Olivia Guy-Evans is a writer and associate editor for Simply Psychology. She has previously worked in healthcare and educational sectors.


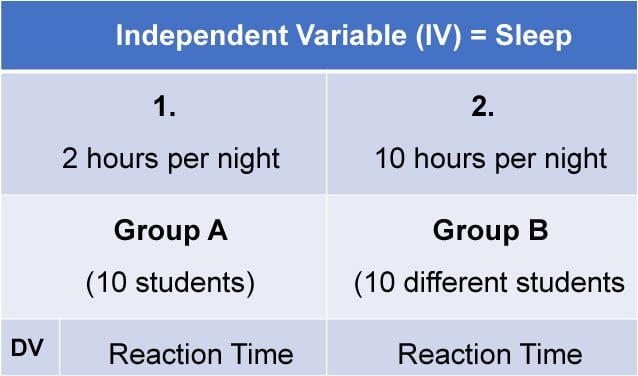

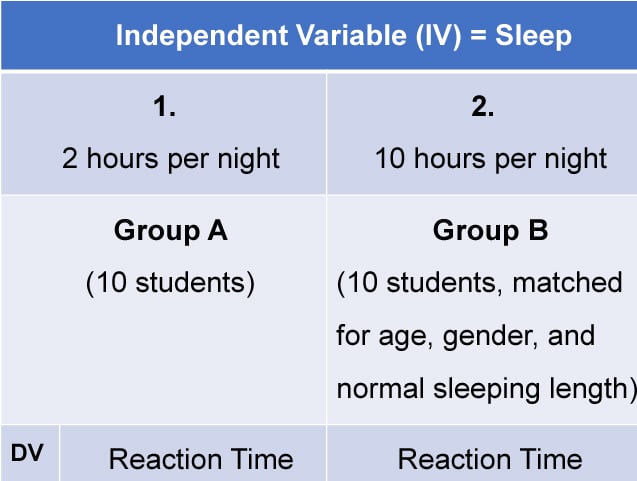

Experimental design refers to how participants are allocated to different groups in an experiment. Types of design include repeated measures, independent groups, and matched pairs designs.

Probably the most common way to design an experiment in psychology is to divide the participants into two groups, the experimental group and the control group, and then introduce a change to the experimental group, not the control group.

The researcher must decide how they will allocate their sample to the different experimental groups. For example, if there are 10 participants, will all 10 participants take part in both conditions (e.g., repeated measures), or will the participants be split in half and take part in only one condition each?

Three types of experimental designs are commonly used:

1. Independent Measures

Independent measures design, also known as between-groups, is an experimental design where different participants are used in each condition of the independent variable. This means that each condition of the experiment includes a different group of participants.

This should be done by random allocation, ensuring that each participant has an equal chance of being assigned to either group.

Independent measures involve using two separate groups of participants, one in each condition. Its main advantages and disadvantages, and how to control for them, are:

- Con : More people are needed than with the repeated measures design (i.e., more time-consuming).

- Pro : Avoids order effects (such as practice or fatigue) as people participate in one condition only. If a person is involved in several conditions, they may become bored, tired, and fed up by the time they come to the second condition or become wise to the requirements of the experiment!

- Con : Differences between participants in the groups may affect results, for example, variations in age, gender, or social background. These differences are known as participant variables (i.e., a type of extraneous variable).

- Control : After the participants have been recruited, they should be randomly assigned to their groups. This should ensure the groups are similar, on average (reducing participant variables).
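The random allocation step can be sketched in Python as follows. This is a minimal illustration rather than a prescribed procedure: the participant list is shuffled and then dealt out to the conditions in turn, so every participant has the same chance of ending up in each condition and group sizes differ by at most one. The function name and condition labels are hypothetical.

```python
import random


def randomly_allocate(participants, conditions, seed=None):
    """Shuffle participants and deal them out to conditions in turn.

    Returns a dict mapping each condition to its list of participants.
    """
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)

    allocation = {condition: [] for condition in conditions}
    for i, person in enumerate(shuffled):
        allocation[conditions[i % len(conditions)]].append(person)
    return allocation


participants = [f"P{i}" for i in range(1, 11)]
groups = randomly_allocate(participants, ["experimental", "control"], seed=7)
for condition, members in groups.items():
    print(condition, members)
```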

2. Repeated Measures Design

Repeated Measures design is an experimental design where the same participants participate in each independent variable condition. This means that each experiment condition includes the same group of participants.

Repeated Measures design is also known as within-groups or within-subjects design.

- Pro : As the same participants are used in each condition, participant variables (i.e., individual differences) are reduced.

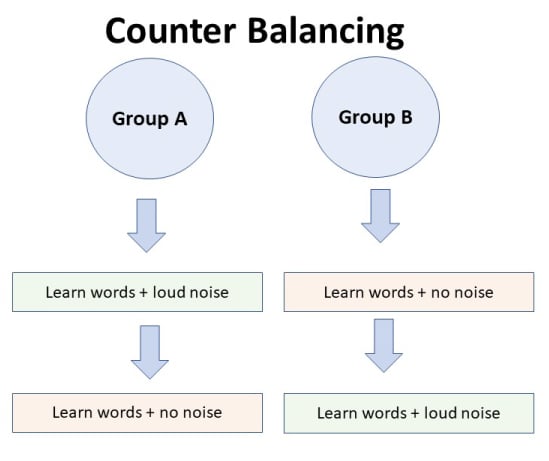

- Con : There may be order effects. Order effects refer to the order of the conditions affecting the participants’ behavior. Performance in the second condition may be better because the participants know what to do (i.e., practice effect). Or their performance might be worse in the second condition because they are tired (i.e., fatigue effect). This limitation can be controlled using counterbalancing.

- Pro : Fewer people are needed as they participate in all conditions (i.e., saves time).

- Control : To combat order effects, the researcher counterbalances the order of the conditions for the participants, alternating the order in which participants perform the different conditions of an experiment.

Counterbalancing

Suppose we used a repeated measures design in which all of the participants first learned words in “loud noise” and then learned them in “no noise.”

We expect the participants to learn better in “no noise” because of order effects, such as practice. However, a researcher can control for order effects using counterbalancing.

The sample would be split into two groups. For example, group 1 does condition ‘A’ (“loud noise”) then condition ‘B’ (“no noise”), and group 2 does ‘B’ then ‘A.’ This is to eliminate order effects.

Although order effects occur for each participant, they balance each other out in the results because they occur equally in both groups.
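The logic of counterbalancing can be checked with a small deterministic model. In the hypothetical Python sketch below, condition A is “loud noise” and condition B is “no noise”; each participant recalls a fixed number of words in each condition, plus a constant practice bonus in whichever condition they do second. The scores, function names, and constant-bonus model are assumptions for illustration. Counterbalancing cancels the bonus here only because it is assumed to be the same size in both orders.

```python
def counterbalanced_orders(participants):
    """Give half the participants the order A-then-B and the other half B-then-A."""
    return {p: ("A", "B") if i % 2 == 0 else ("B", "A")
            for i, p in enumerate(participants)}


def score(condition, position, true_scores, practice_bonus):
    """Hypothetical score: true score for the condition, plus a bonus if done second."""
    return true_scores[condition] + (practice_bonus if position == 1 else 0)


def estimated_effect(orders, true_scores, practice_bonus):
    """Mean (B - A) difference across all participants."""
    diffs = []
    for order in orders.values():
        scores = {cond: score(cond, pos, true_scores, practice_bonus)
                  for pos, cond in enumerate(order)}
        diffs.append(scores["B"] - scores["A"])
    return sum(diffs) / len(diffs)


participants = [f"P{i}" for i in range(20)]
true_scores = {"A": 10, "B": 12}  # assumed words recalled; true effect = 2
practice_bonus = 3                # assumed practice effect in the second condition

same_order = {p: ("A", "B") for p in participants}
balanced = counterbalanced_orders(participants)

print(estimated_effect(same_order, true_scores, practice_bonus))  # 5.0
print(estimated_effect(balanced, true_scores, practice_bonus))    # 2.0
```

Without counterbalancing, the practice effect inflates the apparent benefit of “no noise” from 2 to 5 words. With counterbalancing, the bonus favours B for half the sample and A for the other half, so it cancels out of the mean difference.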

3. Matched Pairs Design

A matched pairs design is an experimental design where pairs of participants are matched in terms of key variables, such as age or socioeconomic status. One member of each pair is then placed into the experimental group and the other member into the control group.

One member of each matched pair must be randomly assigned to the experimental group and the other to the control group.

- Con : If one participant drops out, you lose two participants’ data.

- Pro : Reduces participant variables because the researcher has tried to pair up the participants so that each condition has people with similar abilities and characteristics.

- Con : Very time-consuming trying to find closely matched pairs.

- Pro : It avoids order effects, so counterbalancing is not necessary.

- Con : Impossible to match people exactly unless they are identical twins!

- Control : Members of each pair should be randomly assigned to conditions. However, this does not solve all these problems.
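One simple way to form matched pairs, when participants are matched on a single measured characteristic, is to rank them on that characteristic and pair neighbours. The Python sketch below does this and then randomly assigns one member of each pair to each condition. Matching on one score is an assumption made for illustration (studies often match on several characteristics at once), and the function name, names, and pretest scores are hypothetical.

```python
import random


def matched_pairs_assign(participants, key, seed=None):
    """Pair participants with adjacent `key` values, then split each pair at random.

    Returns (experimental, control); experimental[i] and control[i] form a pair.
    """
    if len(participants) % 2:
        raise ValueError("matched pairs needs an even number of participants")
    rng = random.Random(seed)
    ranked = sorted(participants, key=key)

    experimental, control = [], []
    for i in range(0, len(ranked), 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)  # random assignment within the pair
        experimental.append(pair[0])
        control.append(pair[1])
    return experimental, control


# Hypothetical (name, pretest score) records.
people = [("Ann", 30), ("Ben", 12), ("Cal", 29), ("Dee", 11),
          ("Eve", 20), ("Fay", 21), ("Gus", 5), ("Hal", 6)]
experimental, control = matched_pairs_assign(people, key=lambda p: p[1], seed=3)
for e, c in zip(experimental, control):
    print(f"{e[0]} ({e[1]}) vs {c[0]} ({c[1]})")
```

Ranking and pairing neighbours keeps each pair’s scores close, but, as the list of drawbacks notes, it cannot make two people identical on every relevant characteristic.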

Experimental design refers to how participants are allocated to an experiment’s different conditions (or IV levels). There are three types:

1. Independent measures / between-groups : Different participants are used in each condition of the independent variable.

2. Repeated measures / within-groups : The same participants take part in each condition of the independent variable.

3. Matched pairs : Each condition uses different participants, but they are matched in terms of important characteristics, e.g., gender, age, intelligence, etc.

Learning Check

Read about each of the experiments below. For each experiment, identify (1) which experimental design was used; and (2) why the researcher might have used that design.

1 . To compare the effectiveness of two different types of therapy for depression, depressed patients were assigned to receive either cognitive therapy or behavior therapy for a 12-week period.

The researchers attempted to ensure that the patients in the two groups had similar severity of depressed symptoms by administering a standardized test of depression to each participant, then pairing them according to the severity of their symptoms.

2 . To assess the difference in reading comprehension between 7- and 9-year-olds, a researcher recruited each group from a local primary school. They were given the same passage of text to read and then asked a series of questions to assess their understanding.

3 . To assess the effectiveness of two different ways of teaching reading, a group of 5-year-olds was recruited from a primary school. Their level of reading ability was assessed, and then they were taught using scheme one for 20 weeks.

At the end of this period, their reading was reassessed, and a reading improvement score was calculated. They were then taught using scheme two for a further 20 weeks, and another reading improvement score for this period was calculated. The reading improvement scores for each child were then compared.

4 . To assess the effect of organization on recall, a researcher randomly assigned student volunteers to two conditions.

Condition one attempted to recall a list of words that were organized into meaningful categories; condition two attempted to recall the same words, randomly grouped on the page.

Experiment Terminology

Ecological validity

The degree to which an investigation represents real-life experiences.

Experimenter effects

These are the ways that the experimenter can accidentally influence the participant through their appearance or behavior.

Demand characteristics

Clues in an experiment that lead the participants to think they know what the researcher is looking for (e.g., the experimenter’s body language).

Independent variable (IV)

The variable the experimenter manipulates (i.e., changes). It is assumed to have a direct effect on the dependent variable.

Dependent variable (DV)

The variable the experimenter measures. This is the outcome (i.e., the result) of a study.

Extraneous variables (EV)

All variables which are not independent variables but could affect the results (DV) of the experiment. Extraneous variables should be controlled where possible.

Confounding variables

Variable(s) that have affected the results (DV), apart from the IV. A confounding variable could be an extraneous variable that has not been controlled.

Random Allocation

Randomly allocating participants to independent variable conditions means that all participants should have an equal chance of taking part in each condition.

The principle of random allocation is to avoid bias in how the experiment is carried out and limit the effects of participant variables.

Order effects

Changes in participants’ performance due to their repeating the same or similar test more than once. Examples of order effects include:

(i) practice effect: an improvement in performance on a task due to repetition, for example, because of familiarity with the task;

(ii) fatigue effect: a decrease in performance of a task due to repetition, for example, because of boredom or tiredness.
